Introduction

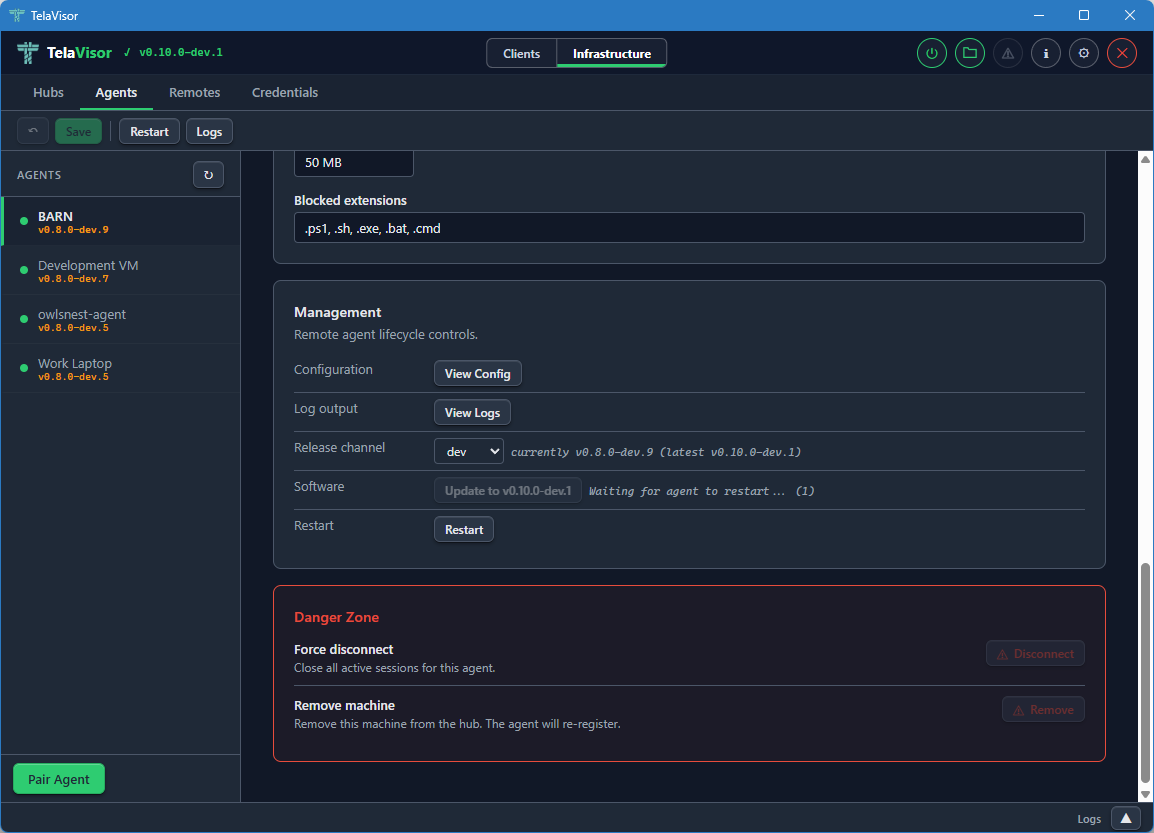

This edition documents Tela v0.16.0-dev.6.

Tela is a connectivity fabric: a small set of programs that lets one machine reach a TCP service on another machine through an encrypted tunnel, without either side opening an inbound port, installing a virtual private network (VPN) client, loading a kernel driver, or running anything as root or Administrator. Remote desktop is the use case I built it for first, but it is only one application that runs on the fabric, not the point of it.

The point of the fabric is that the same three pieces scale from a single laptop reaching a single home server all the way up to a fleet of machines managed by a team, and the scaling does not require switching tools or rearchitecting anything. The pieces are an agent (telad) that runs on the machine you want to reach, a hub (telahubd) that brokers connections, and a client (tela) that runs on the machine you want to reach from. Each is a single static binary with no runtime dependencies. They run on Windows, Linux, and macOS.

| Tier | What it looks like |
|---|---|
| Solo remote access | One agent, one hub, one client. A few minutes from download to first connection. |
| Personal cloud | Several agents at home and work, file sharing, a desktop client for non-terminal users. |
| Team cloud | Named identities, per-machine permissions, pairing codes for onboarding, audit history, remote admin from the desktop client. |
| Fleet | Multiple hubs registered with a directory, identities and permissions managed centrally, agents updating themselves through release channels. |

The next chapter, What Tela is, covers the substrate properties, the design tradeoffs against existing tools, and the things Tela is explicitly not trying to be. It is the concept primer. After that, Installation and First connection get you to a working tunnel.

How this book is organized

Tela is the substrate. This book documents the substrate first and the features built on top of it second.

- The Getting Started section is a fast path from "I have never heard of Tela" to "I have a working tunnel."

- The User Guide section is the reference for the three binaries, the configuration files, and the desktop and portal clients.

- The How-to Guides section is a set of focused walkthroughs for the most common operational tasks.

- The Use Cases section walks through six concrete deployment scenarios with the access model and the deployment pattern for each.

- The Operations section covers the release process for hub and agent operators.

- The Design Rationale section answers the why questions: why three small daemons rather than one, why the hub is a blind relay, why remote administration works through the hub rather than directly, why file sharing has its own dedicated protocol, and why the gateway is a primitive that recurs at four layers. Read it after the body of the book if you want to understand the project's design decisions and the alternatives that were considered and rejected.

- The Appendices collect reference data: the CLI reference (A), the configuration file reference (B), the access model definition (C), the portal wire protocol (D), the Tela Design Language (E), and the glossary (F). Use them as lookups, not as reading.

The book tracks the stable release. It is updated as part of the stable promotion process, so the version shown at the top of this page matches the binaries on the stable channel.

Conventions

- The three binaries are tela (client), telad (agent or daemon), and telahubd (hub or relay).

- "TelaVisor" is the desktop graphical interface built on top of tela.

- A "group" is one hub and the agents connected to it: the basic operational unit. A "fleet" is a collection of groups. The analogy is a carrier battle group (hub as carrier, agents as support vessels) within a fleet.

- A "hub directory" is anything that responds to the small Tela directory protocol; a "portal" is a directory plus extras (dashboard, identity, audit). See Hub directories and portals.

- Code, file paths, command-line flags, and configuration keys are in monospace.

- Mermaid diagrams render natively in the HTML output.

License

Apache License 2.0. See the LICENSE file in the repository.

What Tela is

As mentioned in the introduction, Tela is a connectivity fabric. The basic operational unit in Tela is a group: one hub and all the agents connected to it. A collection of groups is a fleet.

What it solves

The classic remote-access problem looks like this. You have a machine somewhere: a workstation, a server, a Supervisory Control and Data Acquisition (SCADA) gateway, a Raspberry Pi. You want to reach a service on it: Secure Shell (SSH), Remote Desktop Protocol (RDP), PostgreSQL, an HTTP application programming interface (API), a Server Message Block (SMB) share, or anything else that speaks TCP. You are not on the same network. There is a firewall in the way. You don't control the firewall. You can't open inbound ports. You don't want a vendor-locked cloud service. You don't want a kernel-mode VPN that requires admin rights to install.

Most existing solutions force a tradeoff:

| Solution | The tax |
|---|---|
| Traditional VPN | Admin to install on the client, inbound firewall rules on the server, often a kernel driver. |
| SSH port forwarding | Requires SSH access to a publicly reachable jump host. |
| Vendor cloud services (TeamViewer, AnyDesk) | Opaque agent, per-seat pricing, lock-in. |
| Kernel-mode WireGuard | CAP_NET_ADMIN or root, plus a TUN device and inbound firewall rules. |
| Mesh VPN (Tailscale, Nebula, ZeroTier) | TUN device, vendor agent, often blocked on managed corporate endpoints. |

Tela takes the security guarantees of WireGuard and removes the deployment friction.

What makes Tela different

A handful of properties define the design and run through every chapter of this book.

Outbound-only on both ends

The agent and the client both make outbound connections to the hub. Neither needs an inbound firewall rule, port forwarding, dynamic Domain Name System (DNS), or a static Internet Protocol (IP) address. The hub is the only component that needs a public address, and it only needs one inbound TCP port.

No kernel driver, no admin rights

Tela runs WireGuard entirely in userspace through gVisor's network stack. There is no TUN device, no kernel module, and no Administrator or root requirement on either the agent or the client. This is the property that lets Tela work on a managed corporate laptop where you cannot install a VPN, and on a locked-down server where you cannot load drivers.

The hub is a blind relay

All encryption is end to end between the agent and the client. The hub forwards opaque WireGuard ciphertext and cannot read session contents. A compromised hub leaks metadata, not data.

Any TCP service

Tela tunnels arbitrary TCP. SSH, RDP, HTTP, PostgreSQL, SMB, Virtual Network Computing (VNC), or anything else that runs over TCP travels through the same tunnel without the hub having to understand the protocol.

Three transports, automatic fallback

The fabric tries direct peer-to-peer first, falls back to a User Datagram Protocol (UDP) relay through the hub, and falls back again to a WebSocket relay over Transport Layer Security (TLS). Whichever transport is active, the WireGuard payload is the same and the hub still cannot decrypt it.

One binary per role, no runtime dependencies

tela, telad, and telahubd are each a single executable. There is no installer, no package to register with the operating system unless you choose to run them as services, and no shared library to deploy alongside them.

What grows on top of the fabric

Connectivity is the substrate. Everything else in this book is something the project has built on top of it, in the same repository, with the same release process.

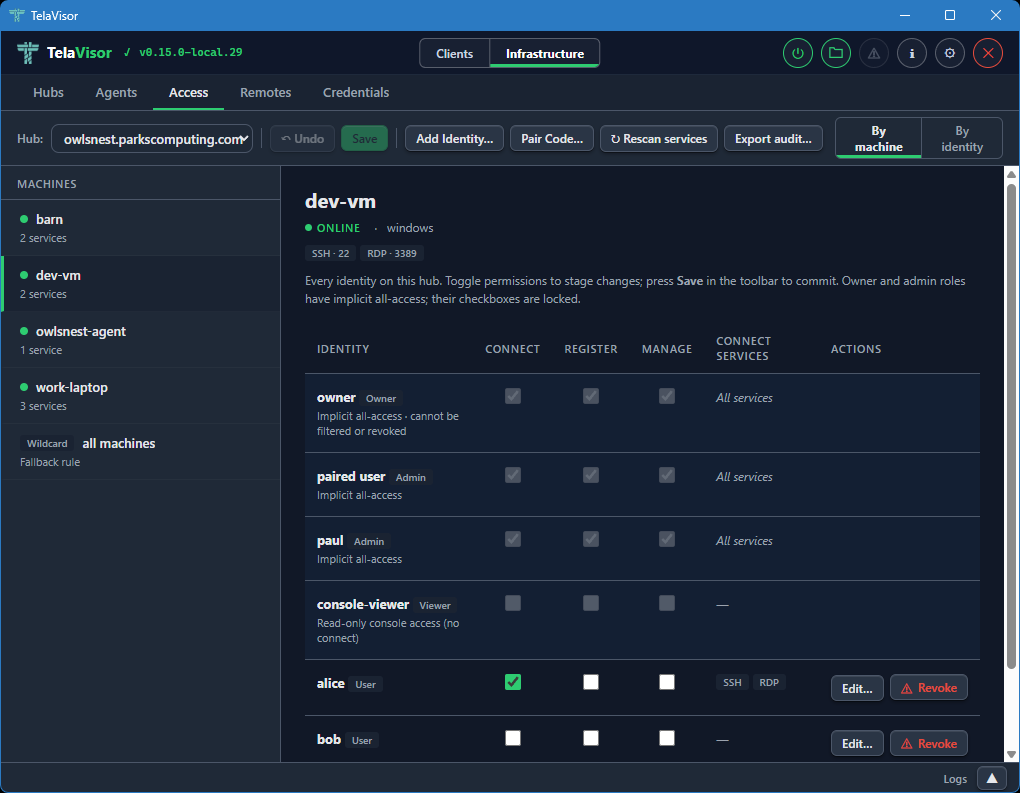

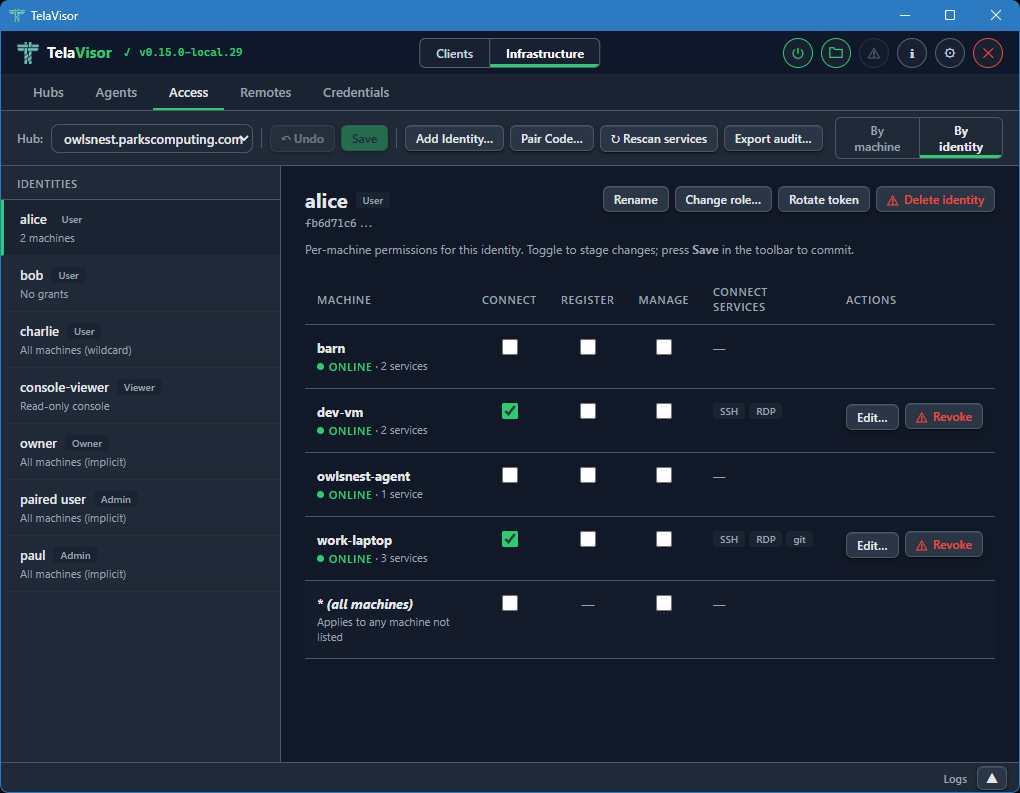

- Token-based access control with four roles (owner, admin, user, viewer) and per-machine permissions for register, connect, and manage.

- One-time pairing codes that replace 64-character hex tokens for onboarding new users and new agents.

- Remote administration of agents and hubs through the same wire as data traffic, so you do not need shell access to the host running an agent or a hub to manage it.

- File sharing through a sandboxed directory on each agent, with upload, download, rename, move, and delete operations available from the command line, the desktop client, or a Web Distributed Authoring and Versioning (WebDAV) mount.

- Gateways, a family of forwarding primitives that Tela uses at several layers of the stack: a path-based HTTP reverse proxy in the agent for routing one tunnel port to several local services, a bridge-mode agent for fronting services on other LAN-reachable machines, outbound dependency rerouting for service-to-service calls, and the hub itself as a relay gateway for opaque WireGuard ciphertext between a client and an agent. They share one rule: forward without inspecting beyond what the layer requires. The 1.0 roadmap extends the family with a multi-hop relay gateway that bridges sessions across more than one hub.

- TelaVisor, a desktop graphical interface that wraps the client and exposes the management features without requiring terminal access.

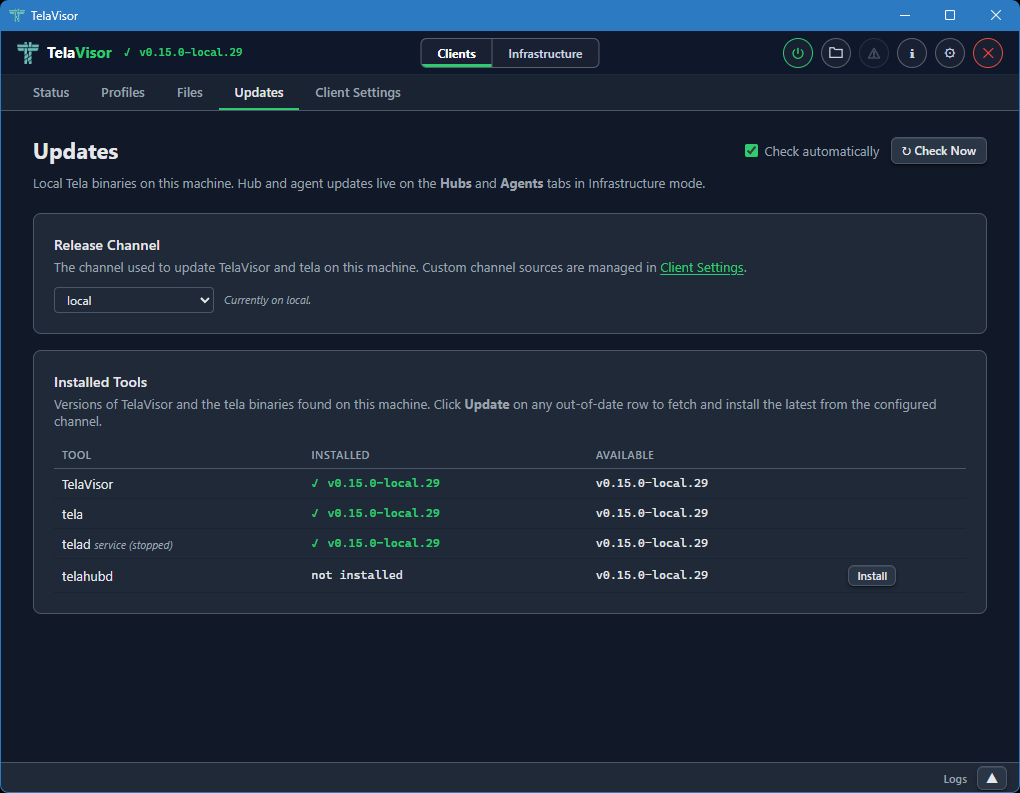

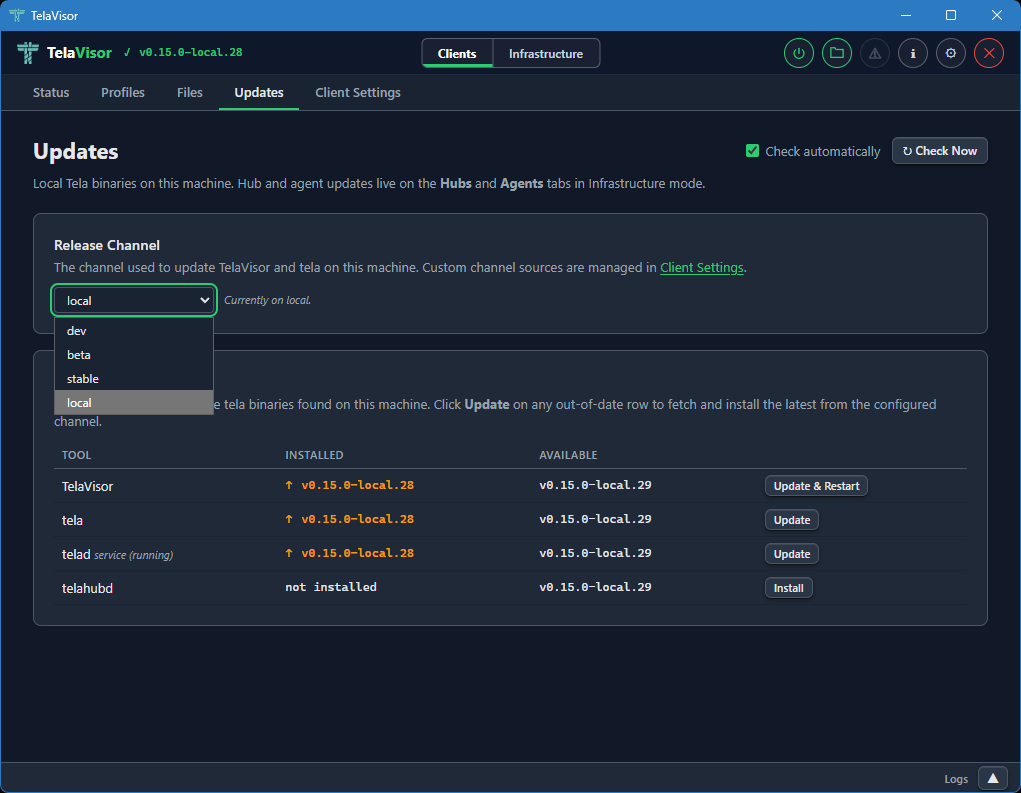

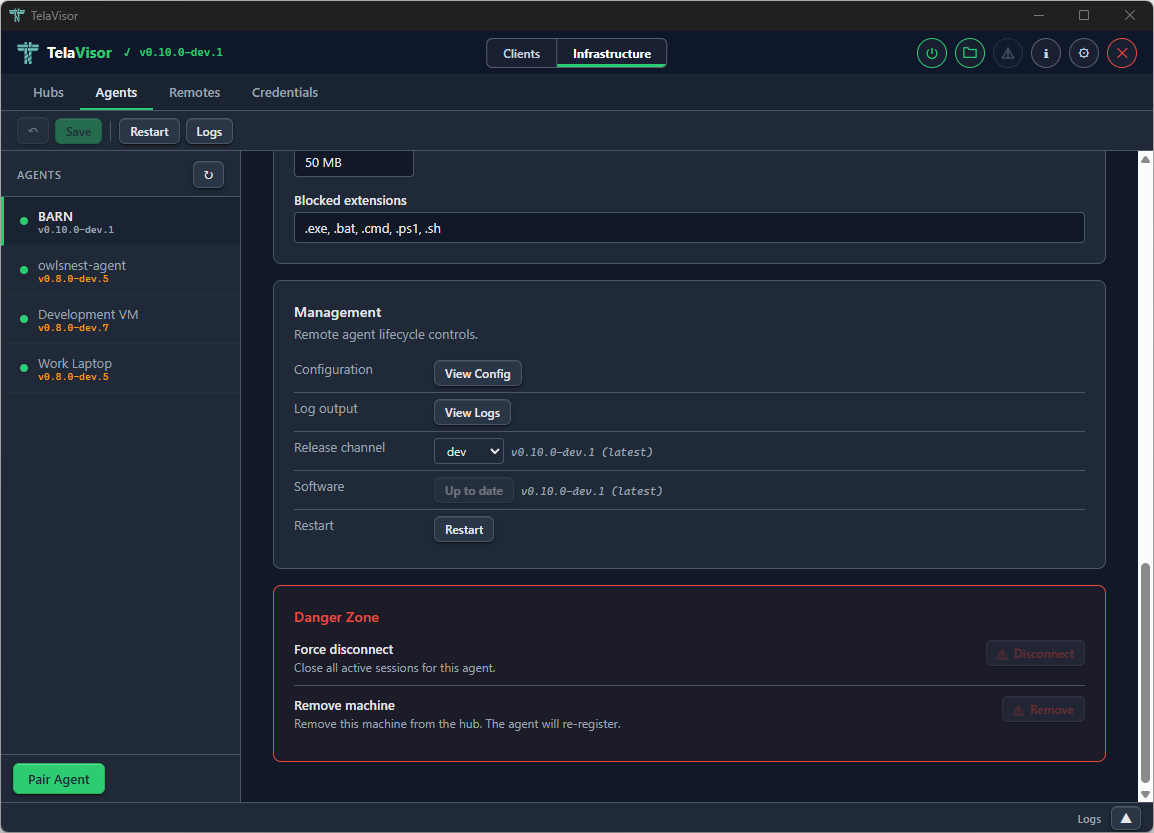

- Self-update through release channels (dev, beta, stable) with signed manifests, so every binary can update itself in place without an external package manager.

- A hub directory protocol that lets a portal list and discover hubs.

These features are not bolted on. They share the protocol, the access model, the configuration system, and the release pipeline of the fabric itself.

What it is not

The word fabric invites projection, so a few explicit non-goals are worth naming up front.

- Not a mesh VPN. There is no overlay network with auto-discovery and no agent-to-agent routing as a first-class feature. You connect to one machine at a time. See A note on the word fabric below.

- Not a multi-tenant SaaS. You run the hub yourself. A portal can aggregate multiple hubs, but each hub still runs under its own operator's control.

- Not a transport for arbitrary IP traffic. It tunnels TCP services, one machine at a time. No UDP services, no Internet Control Message Protocol (ICMP), no full-network IP routing.

- Not a replacement for SSH. It is a way to get SSH (or RDP, or PostgreSQL) onto your laptop without configuring port forwarding or VPNs.

The Topology and addressing section in the Networking chapter answers specific questions about IP addressing, clash avoidance, discoverability, ICMP, agent-to-agent routing, and session limits.

Why three binaries

The split is deliberate.

- telahubd is the only binary that needs to be publicly reachable. Everything about its job is "be the meeting point." It cannot read what flows through it.

- telad lives on the machine you want to reach. Its job is to register with a hub and unwrap the encrypted tunnel into a local TCP connection.

- tela lives on the machine you connect from. Its job is to dial a hub, set up the encrypted tunnel, and bind a local TCP listener that forwards through the tunnel.

This is the WireGuard model expressed as three small daemons. The agent and the client are peers. The hub is a router with no keys. The roles map directly to the operational reality: the agent runs as a service on a machine you own and rarely touch, the client runs on demand on a laptop you carry around, the hub runs on a small virtual server with a public address. They have different lifecycles, different threat models, and different update cadences. Bundling them would force shared concerns where there are none.

A note on the word fabric

Tela is a fabric in the leaf-spine sense, not a mesh in the Tailscale sense. The hub is the spine. The agents and clients are the leaves. Most traffic travels client to hub to agent, the same way a leaf-spine data center fabric routes most traffic leaf to spine to leaf. Clients and agents can negotiate direct peer-to-peer connections when the network allows it, but those connections are an optimization, not the default, and they do not turn Tela into a routed mesh in the way that Tailscale, Nebula, or ZeroTier are. If your design requires agent-to-agent routing without the hub on the data path as a first-class feature, that is a property to evaluate carefully against the chapters in the Design Rationale section. The glossary has the longer history of the word and the prior art that justifies it.

For the architectural details, see Why a connectivity fabric. For installation, see Installation.

Installation

Tela ships through three release channels (dev, beta, stable). Once any one binary is installed, every subsequent update is one command:

```bash
tela update
telad update
telahubd update
```

The bootstrap step is the only one that needs a manual download. Pick whichever channel you want to follow.

Linux / macOS

Pull the latest binary from a channel manifest:

```bash
# Replace 'dev' with 'beta' or 'stable' as desired.
# Replace 'tela-linux-amd64' with the binary you want.
curl -fsSL https://github.com/paulmooreparks/tela/releases/download/channels/dev.json \
  | python3 -c 'import json,sys; m=json.load(sys.stdin); print(m["downloadBase"]+"tela-linux-amd64")' \
  | xargs curl -fLO
chmod +x tela-linux-amd64
sudo mv tela-linux-amd64 /usr/local/bin/tela
```

For telad and telahubd, repeat with the matching binary name.

Windows

From PowerShell:

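```powershell
$m = Invoke-RestMethod https://github.com/paulmooreparks/tela/releases/download/channels/dev.json
# Invoke-WebRequest -OutFile does not create missing folders, so create the target first.
New-Item -ItemType Directory -Force -Path "C:\Users\$env:USERNAME\bin" | Out-Null
Invoke-WebRequest ($m.downloadBase + 'tela-windows-amd64.exe') -OutFile "C:\Users\$env:USERNAME\bin\tela.exe"
```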

Make sure C:\Users\<you>\bin is on your PATH.

TelaVisor (desktop GUI)

For Windows, download the NSIS installer from any release page or directly from the channel manifest:

```powershell
$m = Invoke-RestMethod https://github.com/paulmooreparks/tela/releases/download/channels/dev.json
Invoke-WebRequest ($m.downloadBase + 'telavisor-windows-amd64-setup.exe') -OutFile TelaVisor-Setup.exe
.\TelaVisor-Setup.exe
```

For Linux, the channel manifest also contains .deb and .rpm packages and a bare binary. For macOS, it contains a .tar.gz of the .app bundle.

Channels

| Channel | What it is | Tag form |
|---|---|---|
| dev | Latest unstable build, every commit to main | v0.8.0-dev.42 |
| beta | Promoted dev build ready for wider exposure | v0.8.0-beta.3 |
| stable | Promoted beta build, the conservative line | v0.8.0, v0.6.1 |

The model is documented in Release process.

Verifying downloads

Every download Tela does internally is SHA-256-verified against the channel manifest before being installed. If you want to verify a manual download by hand, every release also publishes a SHA256SUMS.txt asset:

```bash
curl -fLO https://github.com/paulmooreparks/tela/releases/download/v0.8.0-dev.8/SHA256SUMS.txt
sha256sum -c SHA256SUMS.txt --ignore-missing
```

Next steps after downloading

Downloading the binary is the first step, not the last. What to do next depends on which binary you installed:

| Binary | Next step |
|---|---|
| telahubd | Follow Run a hub on the public internet. The walkthrough covers picking a deployment model (Caddy, nginx, Apache, Cloudflare Tunnel, or direct), installing the OS service, bootstrapping the owner token, and configuring the reverse proxy. |
| telad | Follow Run an agent to register a machine with a hub. |
| tela (client) | Follow First connection to pair with a hub and open your first tunnel. |
| TelaVisor | Launch the app after install; it walks you through pairing on first run. |

After bootstrapping

Every Tela binary has an update subcommand that follows the configured channel. Once you have one of them installed, you no longer need to think about manual downloads:

```bash
tela update
sudo telad update
sudo telahubd update
```

To switch channels:

```bash
tela channel set beta           # client (and TelaVisor)
sudo telad channel set beta     # agent (writes to telad.yaml)
sudo telahubd channel set beta  # hub (writes to telahubd.yaml)
```

For a one-shot override that does not persist, pass -channel <name> to the update subcommand: sudo telahubd update -channel beta. Any valid channel name works (dev, beta, stable, or a custom channel you have configured).

For the full picture see the Self-update and release channels how-to.

First connection: hello, hub

Install tela, telad, and telahubd before starting (see Installation). The steps below walk through a minimal three-machine setup: one hub, one agent, one client, ending at a working SSH connection.

For the full CLI reference including all flags and configuration options, see Appendix A: CLI reference.

The scenario

Picture two machines that cannot reach each other directly:

- web01 is a Linux server sitting on a private network: a home lab, a cloud VM behind NAT, a machine at a co-location facility, anything that has no publicly accessible inbound port. The name web01 is just a label we give the machine inside Tela; it can be any string you choose. This is the machine you want to reach. It runs telad, the agent daemon.

- Your laptop is wherever you are. It runs tela, the client. It also cannot accept inbound connections: it is behind a home router or a corporate firewall.

Because neither machine accepts inbound connections, they cannot talk to each other directly. The hub solves this.

hub.example.com is a small server with a public IP address. It does not need to be powerful: it only brokers connections and never decrypts tunnel traffic. It runs telahubd.

Both web01 and your laptop connect outbound to the hub. The hub pairs them and starts relaying WireGuard packets between them. Once the WireGuard tunnel is up, your laptop can reach the ports that web01's agent exposes as if the two machines were on the same network.

When the walkthrough is done, your laptop will have a local port that reaches web01:

```text
Services available:
localhost:22 → SSH
```

The port shown is what tela bound on your machine. Use that port whenever you connect to web01.

The three binaries, one on each machine

- telahubd on hub.example.com: the broker. Needs a public IP. Nothing sensitive passes through it in plaintext.

- telad on web01: the agent. Registers the machine with the hub and exposes its ports through the tunnel.

- tela on your laptop: the client. Connects to the hub, opens the tunnel to web01, and binds local addresses for each exposed port.

Nothing has to be open inbound on web01 or your laptop.

Step 1: Start the hub

On hub.example.com:

```bash
telahubd -port 8080
```

telahubd listens on port 8080 (HTTP+WebSocket) and 41820 (UDP relay) in this example. The default is port 80, which requires elevated privileges on Linux; using a non-privileged port avoids that. Use a real config file with TLS for anything past a quick test. See Run a hub on the public internet for the production walkthrough.

On first start the hub auto-generates an owner token and prints it. Save it somewhere; you will need it for everything below.

The owner token is the highest-privilege credential on the hub -- treat it like a root password. This walkthrough uses it directly for both the agent and the client for simplicity. In a real deployment you would create separate lower-privilege tokens for each: one for the agent (register permission) and one per user (connect permission). See Run a hub on the public internet for the production pattern.

Step 2: Start the agent on web01

On web01:

```bash
telad -hub ws://hub.example.com:8080 -machine web01 -token <owner-token> -ports 22
```

This registers web01 with the hub and tells the hub that the agent will expose TCP port 22. After a moment, the hub's /api/status endpoint should list web01 as a registered machine. The URL uses ws:// because this quick-test hub serves plain HTTP; a hub with TLS is reached with wss://.

Step 3: Connect from your laptop

On your laptop:

```bash
tela connect -hub ws://hub.example.com:8080 -machine web01 -token <owner-token>
```

The client opens a WireGuard tunnel through the hub to web01 and binds SSH on a deterministic loopback address. The output shows the address:

```text
Services available:
localhost:22 → SSH
```

Leave it running.

Step 4: SSH

In another terminal, use the port from the output:

```bash
ssh -p 22 user@localhost
```

You're now SSH'd into web01 through an end-to-end encrypted WireGuard tunnel that the hub never decrypted.

What just happened

```mermaid
sequenceDiagram
    participant Laptop as Laptop (tela)
    participant Hub as hub.example.com (telahubd)
    participant Web01 as web01 (telad)
    Web01->>Hub: register web01, expose port 22
    Laptop->>Hub: connect to web01
    Hub->>Web01: client wants you
    Hub-->>Laptop: paired, here's the channel
    Laptop->>Web01: WireGuard handshake (E2E)
    Note over Laptop,Web01: Hub forwards ciphertext only
    Laptop->>Web01: TCP through tunnel (SSH)
```

The hub paired the two sides and started forwarding WireGuard packets. It cannot read those packets: WireGuard's encryption is between the laptop and web01, with keys neither side ever sent to the hub.

Where to go next

- Run a hub on the public internet for the real production setup with TLS, auth, and a service manager

- Run an agent for the agent's full deployment story

- Run Tela as an OS service to survive reboots without manual restarts

- Self-update and release channels once you have more than one box

- TelaVisor desktop app for a GUI alternative

The three binaries

Tela is built around three cooperating Go binaries. Each one runs from a single static executable with no runtime dependencies.

| Binary | Role | Where it runs |
|---|---|---|
| telahubd | Hub relay. Brokers encrypted sessions between agents and clients. Sees only ciphertext. | A publicly reachable server. |
| telad | Agent / daemon. Registers a machine with a hub and exposes selected TCP services through the encrypted tunnel. | The machine you want to reach. |
| tela | Client CLI. Connects to a machine through a hub and binds services on deterministic loopback addresses through the encrypted tunnel. | Any machine you want to connect from. |

A connection involves all three:

```mermaid
flowchart LR
    Client["tela (client)"]
    Hub["telahubd (hub)<br/>blind relay: ciphertext only"]
    Agent["telad (agent)"]
    Service["Local Service"]
    Client -- "wss / udp" --> Hub
    Agent -- "ws / udp" --> Hub
    Agent --> Service
    subgraph "WireGuard Tunnel (E2E encrypted)"
        direction LR
        Client -.-|"Curve25519 + ChaCha20"| Agent
    end
```

Both tela and telad make outbound connections to the hub. Neither side needs to open inbound ports or configure port forwarding. The hub is the only component that needs to be publicly reachable.

The hub is a blind relay. It pairs clients with agents and forwards WireGuard packets between them, but it cannot decrypt the contents -- only the agent and the client share the keys. Even if the hub is compromised, session contents are not exposed.

For the full design rationale, see Why a connectivity fabric. For the CLI surface of each binary, see Appendix A: CLI reference.

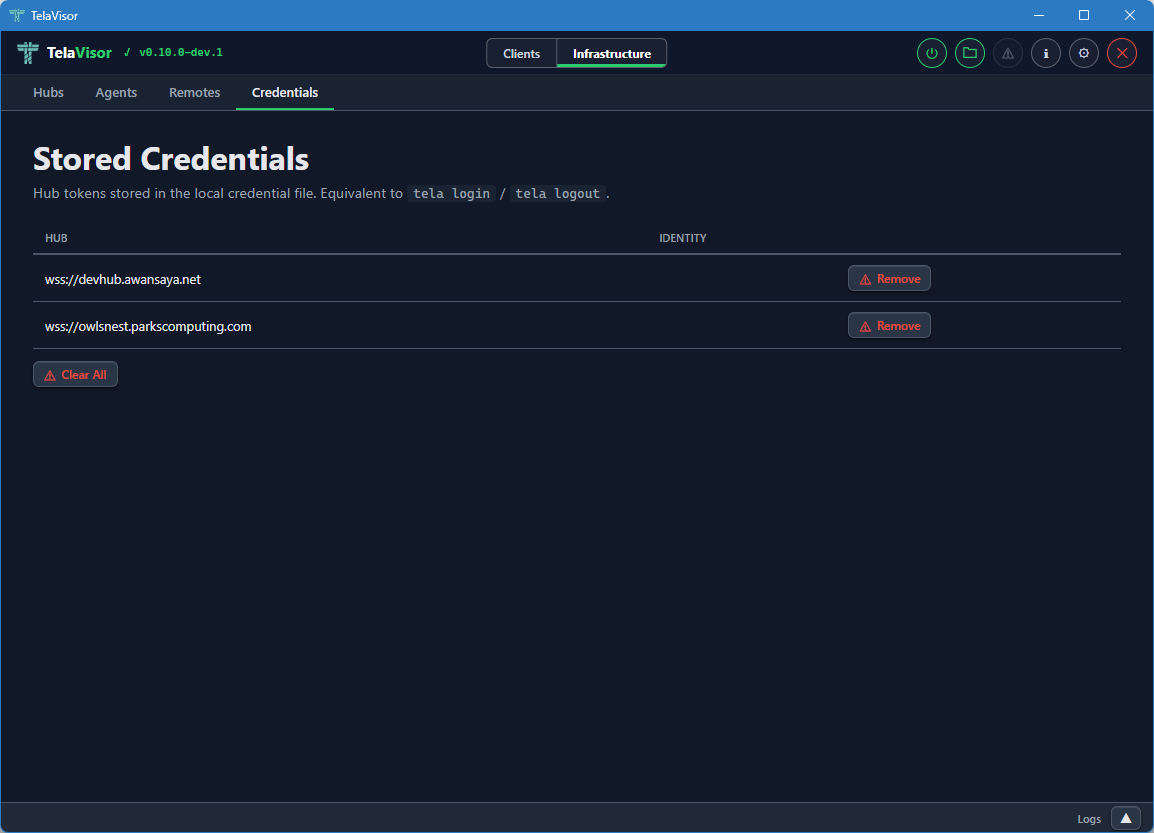

Credentials and pairing

Tela stores hub tokens in a local credential file so you do not need to pass a -token flag on every command. This chapter explains how credentials are stored, how to add and remove them, and how one-time pairing codes let administrators onboard users and agents without distributing 64-character hex tokens by hand.

The credential store

The credential store is a YAML file at:

- Linux / macOS: ~/.tela/credentials.yaml

- Windows: %APPDATA%\tela\credentials.yaml

It is written with 0600 permissions (owner read/write only). It maps hub URLs to tokens. When tela or telad needs a token for a hub and none is provided on the command line, it looks here first.

telad running as an OS service uses a system-level credential store instead:

- Linux: /etc/tela/credentials.yaml

- Windows: %ProgramData%\Tela\credentials.yaml

Writing to the system store requires administrator or root privileges.

Storing credentials

```bash
tela login wss://hub.example.com
# Token: (paste token, press Enter)
# Identity (press Enter to skip): alice
```

tela login prompts for a token and an optional identity label, then stores both in the user credential store. Once stored, any tela command targeting that hub finds the token automatically.

For telad running as a service:

```bash
sudo telad login -hub wss://hub.example.com
# Token: (paste token, press Enter)
```

telad login requires elevated privileges because it writes to the system credential store.

Removing credentials

```bash
tela logout wss://hub.example.com
sudo telad logout -hub wss://hub.example.com
```

Pinning a hub's TLS certificate

Tokens prove that the bearer is allowed to talk to a hub. They do not prove that the connection is actually reaching that hub and not a rogue proxy in the middle. Tela addresses that with TLS certificate pinning: a per-hub fingerprint stored alongside the token, checked on every tela connect, refused on mismatch.

The fingerprint is the SHA-256 hash of the hub's TLS leaf certificate's Subject Public Key Info (SPKI), formatted as sha256:<lowercase hex>. Pinning the SPKI rather than the whole certificate means certificate renewal with the same key does not break the pin. Key rotation does break the pin, which is the point: a key rotation is exactly the case where the operator should re-confirm the change.

First connect (TOFU)

The first time tela connect succeeds against a hub with no pin configured, it logs the captured fingerprint and the exact command to enforce it next time:

```text
hub wss://hub.example.com presented certificate sha256:1a2b...3456 (no pin configured;
run 'tela pin wss://hub.example.com sha256:1a2b...3456' to enforce it)
```

If you want the connection refused on any future certificate change, copy the fingerprint and pin it:

```bash
tela pin wss://hub.example.com sha256:1a2b...3456
```

Inspect, change, or remove a pin

```bash
tela pin wss://hub.example.com                         # show the current pin
tela pin wss://hub.example.com sha256:newfingerprint   # update (use after a deliberate key rotation)
tela pin wss://hub.example.com -clear                  # remove (return to plain CA-chain trust)
```

Pinning at credential-creation time

```bash
tela login wss://hub.example.com -pin sha256:1a2b...3456
```

Useful when the operator gives you both the token and the expected fingerprint up front (out-of-band, e.g. a chat message or a printed handout for an air-gapped onboarding).

What a pin mismatch looks like

```text
$ tela connect -hub wss://hub.example.com -machine prod-db
websocket dial failed: ... certpin: presented certificate does not match
pinned fingerprint: got sha256:9999..., want sha256:1a2b...
```

The connection is refused before any token, machine name, or other secret is sent. If this happens unexpectedly, do not update the pin until you have confirmed the change with the hub operator out-of-band.

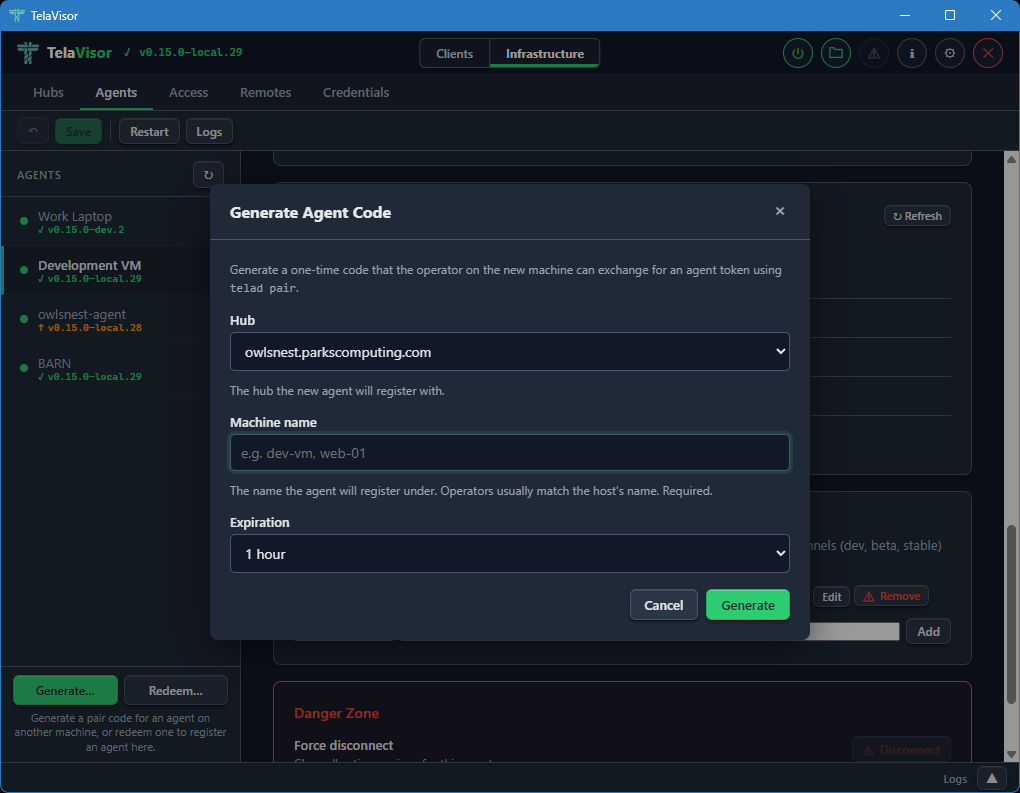

Pairing codes

A pairing code is a short, single-use code that a hub administrator generates for a user or agent. The recipient redeems it for a permanent token without ever seeing or handling the raw token value. Codes expire between 10 minutes and 7 days after generation.

Generating a code (administrator)

```bash
# Generate a connect code for a user (grants connect access to machine barn)
tela admin pair-code barn -hub wss://hub.example.com -token <owner-token>

# Generate a connect code that expires in 24 hours
tela admin pair-code barn -hub wss://hub.example.com -token <owner-token> -expires 24h

# Generate a register code for a new agent
tela admin pair-code barn -hub wss://hub.example.com -token <owner-token> -type register

# Generate a code granting access to all machines
tela admin pair-code barn -hub wss://hub.example.com -token <owner-token> -machines '*'
```

The command prints the code and the corresponding redemption command to give to the recipient:

```text
Generated pairing code: ABCD-1234
Expires: 2026-04-15T10:30:00Z

Client pairing command:
tela pair -hub wss://hub.example.com -code ABCD-1234
```

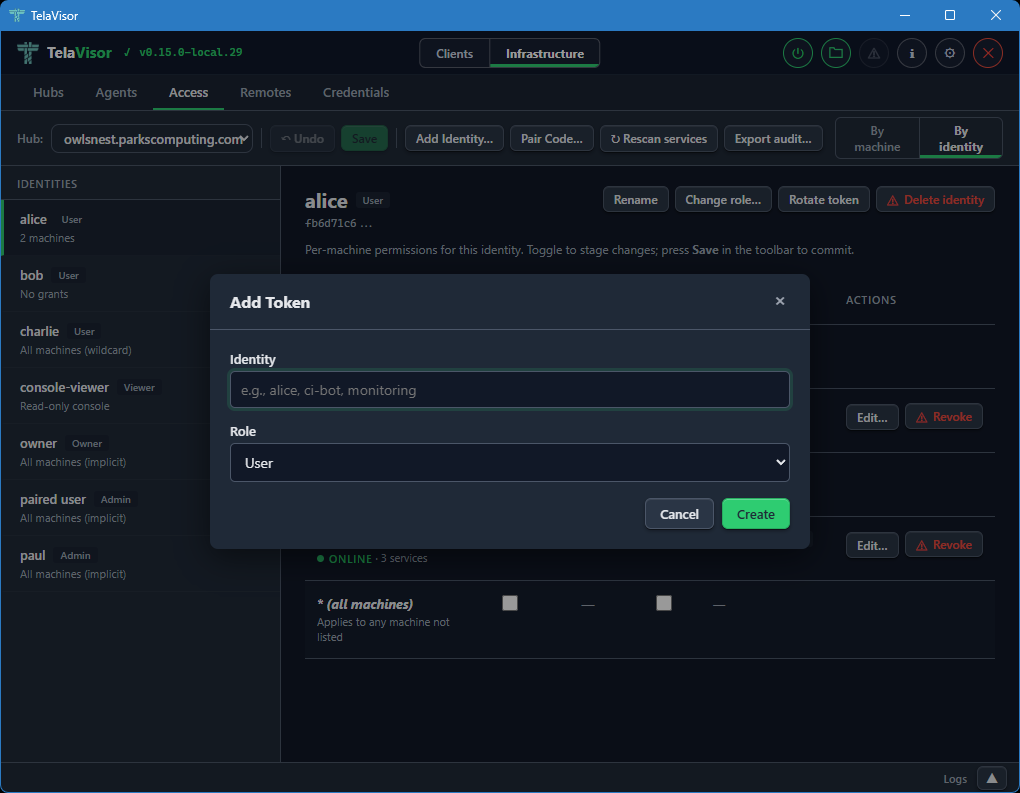

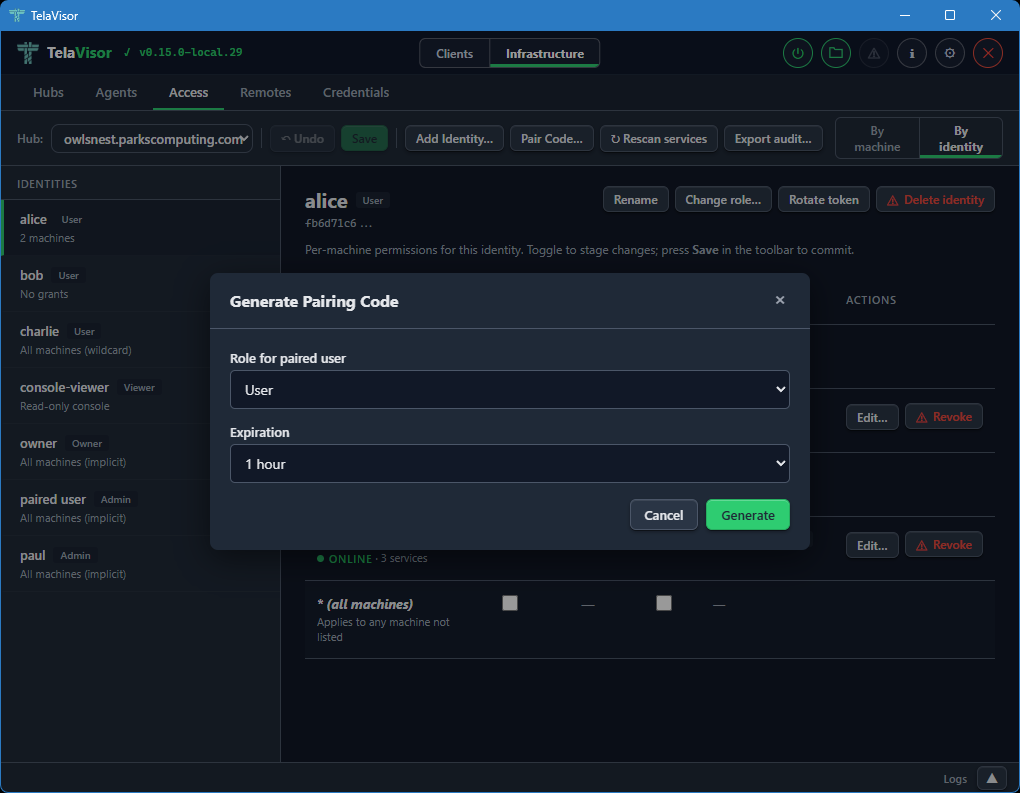

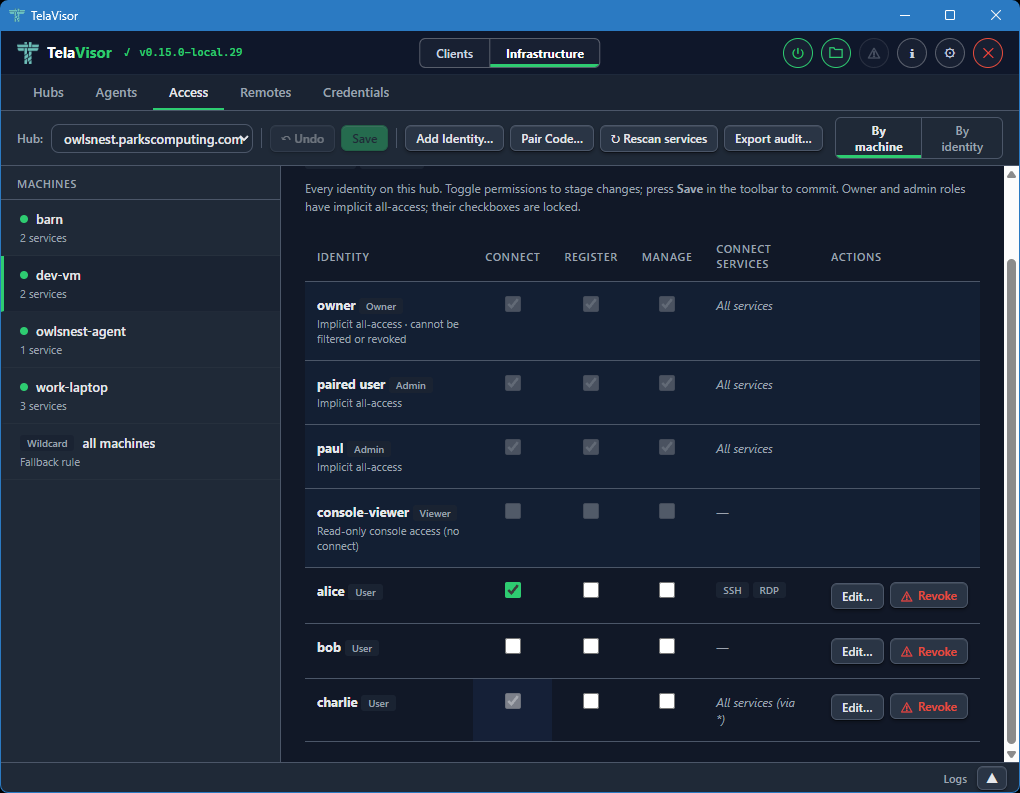

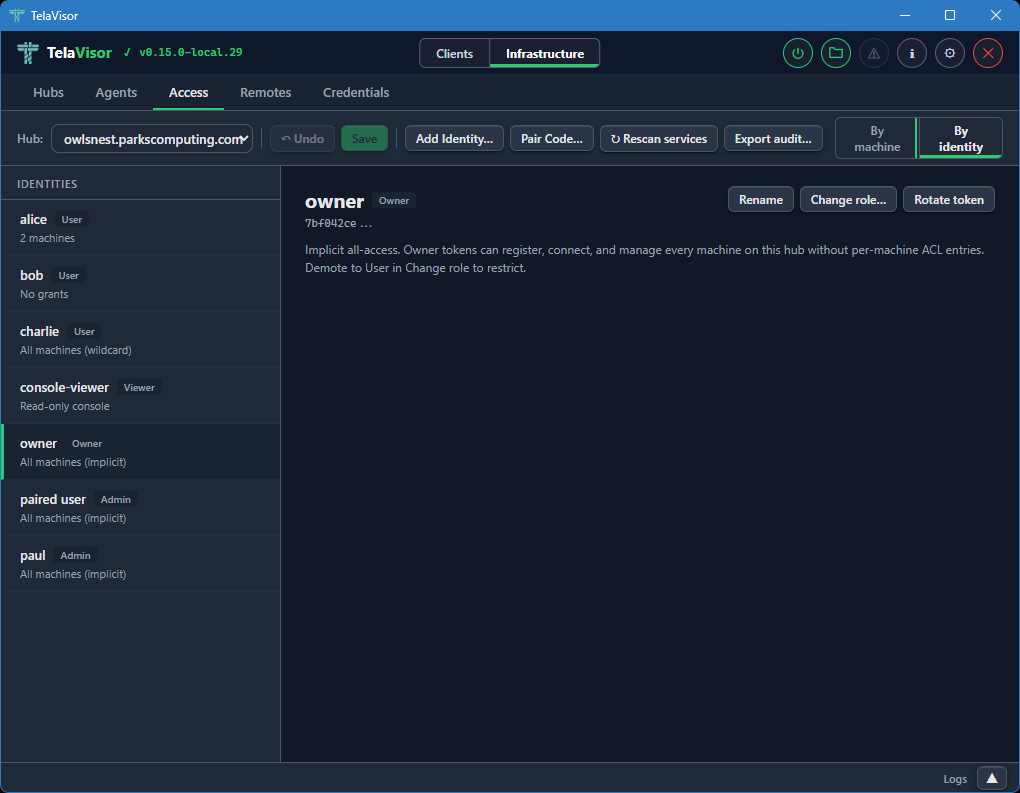

Codes can also be generated from TelaVisor's Access tab, using the Pair Code... button in the toolbar, for administrators who prefer a graphical interface.

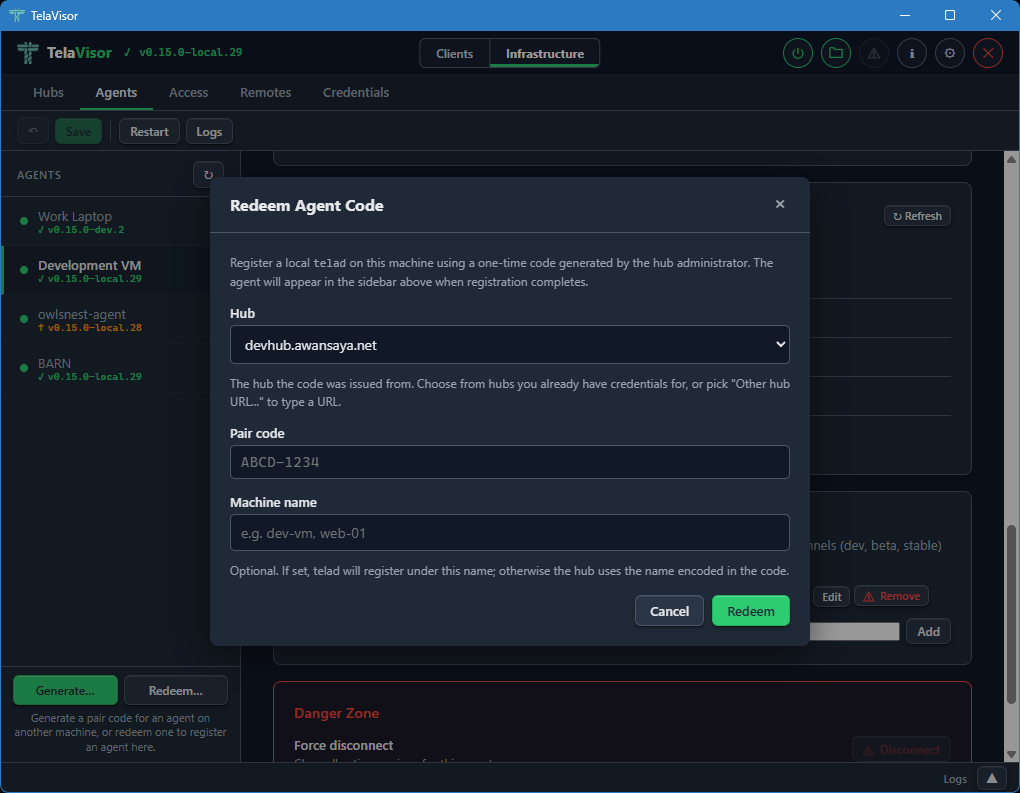

Redeeming a code (user)

```bash
tela pair -hub wss://hub.example.com -code ABCD-1234
```

tela pair contacts the hub, exchanges the code for a permanent token, and stores the token in the user credential store. The code is consumed on redemption and cannot be used again.

After pairing, the user can connect without a -token flag:

```bash
tela connect -hub wss://hub.example.com -machine barn
```

Redeeming a code (agent)

```bash
sudo telad pair -hub wss://hub.example.com -code ABCD-1234
```

telad pair stores the resulting token in the system credential store. The agent then connects to the hub without a token in its config file.

For administrators who prefer a graphical interface and run telad on the same machine as TelaVisor (developer workstations, single-host setups), the Agents tab's Redeem... button opens a dialog that runs the same telad pair invocation under the hood. The button only handles the local case; agents on remote hosts must redeem on the host itself, since the resulting token has to land in that host's credential store.

Connection profiles

A connection profile is a YAML file that describes one or more tunnels. Running tela connect -profile <name> opens all of them in parallel with a single command, each reconnecting independently on failure.

Profiles live in ~/.tela/profiles/ (or %APPDATA%\tela\profiles\ on Windows). Each file is named <profile-name>.yaml.

A minimal profile

```yaml
# ~/.tela/profiles/work.yaml
connections:
  - hub: wss://hub.example.com
    machine: dev-server
    services:
      - remote: 22
```

```bash
tela connect -profile work
```

This opens a tunnel to port 22 on dev-server and binds it to a deterministic loopback address. Use tela status to see the bound address.

Multiple connections

A profile can open tunnels to any number of machines across any number of hubs simultaneously:

```yaml
connections:
  - hub: wss://hub.example.com
    machine: dev-server
    services:
      - remote: 22
      - remote: 5432
  - hub: wss://hub.example.com
    machine: build-server
    services:
      - remote: 22
  - hub: wss://other-hub.example.com
    machine: staging-db
    services:
      - name: postgres
```

Each connection gets its own deterministic loopback address. Services on different machines never share a local port.

Tokens and credentials

If a token is stored in the credential store for a hub (via tela login or tela pair), the profile does not need to include it. The token is looked up automatically.

To embed a token explicitly:

```yaml
connections:
  - hub: wss://hub.example.com
    machine: barn
    token: ${MY_HUB_TOKEN}
```

Profile YAML supports environment variable expansion with ${VAR} syntax. This is useful for tokens in CI/CD environments where you do not want credentials in files on disk.

Specifying services

Services can be identified by port number, by name, or with a local port override:

```yaml
services:
  # By port number -- connects remote port 22 to local port 22
  - remote: 22
  # By port number with a local override -- useful when 22 is taken locally
  - remote: 22
    local: 2222
  # By service name -- resolves the port from the hub's service registry
  - name: postgres
  # By service name with a local port override
  - name: rdp
    local: 13389
```

When you specify name: gateway, the gateway service is resolved the same way as any other named service.
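The service forms above reduce to one rule: find the remote port (directly, or by looking the name up), then default the local port to the remote one. The Go sketch below states that rule. The registry map is a hypothetical stand-in for the hub's service registry, and giving name precedence over remote when both are set is an assumption.

```go
package main

import (
	"errors"
	"fmt"
)

// service mirrors one entry under "services:"; zero values mean "not set".
type service struct {
	Name   string
	Remote int
	Local  int
}

// resolve returns the remote and local ports for one service entry.
// registry is a hypothetical stand-in for the hub's service registry.
func resolve(s service, registry map[string]int) (remote, local int, err error) {
	remote = s.Remote
	if s.Name != "" { // assumption: a name takes precedence over remote
		p, ok := registry[s.Name]
		if !ok {
			return 0, 0, fmt.Errorf("service %q is not in the registry", s.Name)
		}
		remote = p
	}
	if remote == 0 {
		return 0, 0, errors.New("service entry needs remote or name")
	}
	local = s.Local
	if local == 0 {
		local = remote // default: same port number locally
	}
	return remote, local, nil
}

func main() {
	registry := map[string]int{"postgres": 5432, "rdp": 3389} // hypothetical advertised ports
	for _, s := range []service{
		{Remote: 22},
		{Remote: 22, Local: 2222},
		{Name: "postgres"},
		{Name: "rdp", Local: 13389},
	} {
		remote, local, err := resolve(s, registry)
		fmt.Println(remote, local, err)
	}
	// Output lines: 22 22 <nil> / 22 2222 <nil> / 5432 5432 <nil> / 3389 13389 <nil>
}
```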

Pinning a loopback address

By default each machine gets a deterministic loopback address derived from the hub URL and machine name. To fix a specific address instead:

```yaml
connections:
  - hub: wss://hub.example.com
    machine: barn
    address: 127.99.1.1
    services:
      - remote: 22
```

The address must be in the 127.0.0.0/8 range.

Auto-mounting file shares

If machines in the profile have file sharing enabled, you can configure the profile to mount them as a local drive automatically when the profile connects:

```yaml
connections:
  - hub: wss://hub.example.com
    machine: barn
    services:
      - remote: 22
mount:
  mount: "T:"   # drive letter on Windows, or a directory path on macOS/Linux (quoted: an unquoted T: is a YAML error)
  auto: true    # mount automatically when the profile starts
  port: 18080   # WebDAV listen port (default 18080)
```

DNS name resolution

The dns block configures the loopback prefix used by tela dns hosts for this profile:

connections:
  - hub: wss://hub.example.com
    machine: barn

dns:
  loopback_prefix: "127.88"   # prefix for 'tela dns hosts' entries; does not affect port binding

See the DNS names section below for how to add machine names to your hosts file.

Managing profiles

tela profile list # list all profiles

tela profile show work # print the contents of work.yaml

tela profile create staging # create a new empty profile at ~/.tela/profiles/staging.yaml

tela profile delete old-work # delete a profile

tela profile create writes a starter file with example comments. Edit it with any text editor.

DNS names

tela dns hosts generates /etc/hosts entries for all machines in a profile, using their deterministic loopback addresses:

tela dns hosts

# Tela local names -- generated by 'tela dns hosts'

# 127.88.12.34 barn.tela

# 127.88.56.78 dev-server.tela

Append the output to your hosts file to enable name-based access:

# Linux / macOS (the hosts file is root-owned; sudo tee performs the privileged append)

tela dns hosts | sudo tee -a /etc/hosts

# Windows (run as Administrator)

tela dns hosts >> C:\Windows\System32\drivers\etc\hosts

The default suffix is .tela. Override it:

tela dns hosts -suffix local # barn.local

tela dns hosts -suffix "" # bare names: barn, dev-server

tela dns hosts -profile staging # use a specific profile

After adding the entries, connect to a machine by name:

ssh user@barn.tela

psql -h dev-server.tela -U postgres
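Generating the entries is a simple mapping from machine name to loopback address. A sketch of that mapping, assuming the addresses are already known and that output is sorted by machine name for stable diffs (both assumptions; hostsLines is a hypothetical helper). The header line mirrors the sample output above.

```go
package main

import (
	"fmt"
	"sort"
	"strings"
)

// hostsLines maps each machine's loopback address to "<machine>.<suffix>",
// or to the bare machine name when suffix is empty. Sorted by name.
func hostsLines(addrs map[string]string, suffix string) []string {
	names := make([]string, 0, len(addrs))
	for name := range addrs {
		names = append(names, name)
	}
	sort.Strings(names)

	lines := []string{"# Tela local names -- generated by 'tela dns hosts'"}
	for _, name := range names {
		host := name
		if suffix != "" {
			host = name + "." + strings.TrimPrefix(suffix, ".")
		}
		lines = append(lines, addrs[name]+" "+host)
	}
	return lines
}

func main() {
	addrs := map[string]string{"barn": "127.88.12.34", "dev-server": "127.88.56.78"}
	fmt.Println(strings.Join(hostsLines(addrs, "tela"), "\n"))
}
```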

Running a profile as an OS service

A profile can run as a persistent OS service that reconnects automatically after reboots and connection drops:

tela service install -config ~/.tela/profiles/work.yaml

tela service start

See Run Tela as an OS service for the full setup.

Hub directories and portals

A single hub is enough for one team in one place. Real organizations end up with several: per environment, per customer, per region, per acquisition. The fabric handles this with a small directory protocol that lets a client resolve hub names instead of memorizing URLs, and an optional portal layer that adds dashboards and visibility on top of the directory.

The directory protocol

Tela ships a hub directory protocol as part of the fabric, not as a separate product. Two endpoints define it:

- /.well-known/tela is the discovery endpoint, following Request for Comments (RFC) 8615 (well-known Uniform Resource Identifiers). A client fetches it to discover where the directory's other endpoints live and what authentication they expect.

- /api/hubs is the directory itself: a list of hubs registered with this directory, each with a name, a public Uniform Resource Locator (URL), and optional metadata.

That is the whole protocol. Anything that responds correctly on those two endpoints is a hub directory, regardless of what else it does.

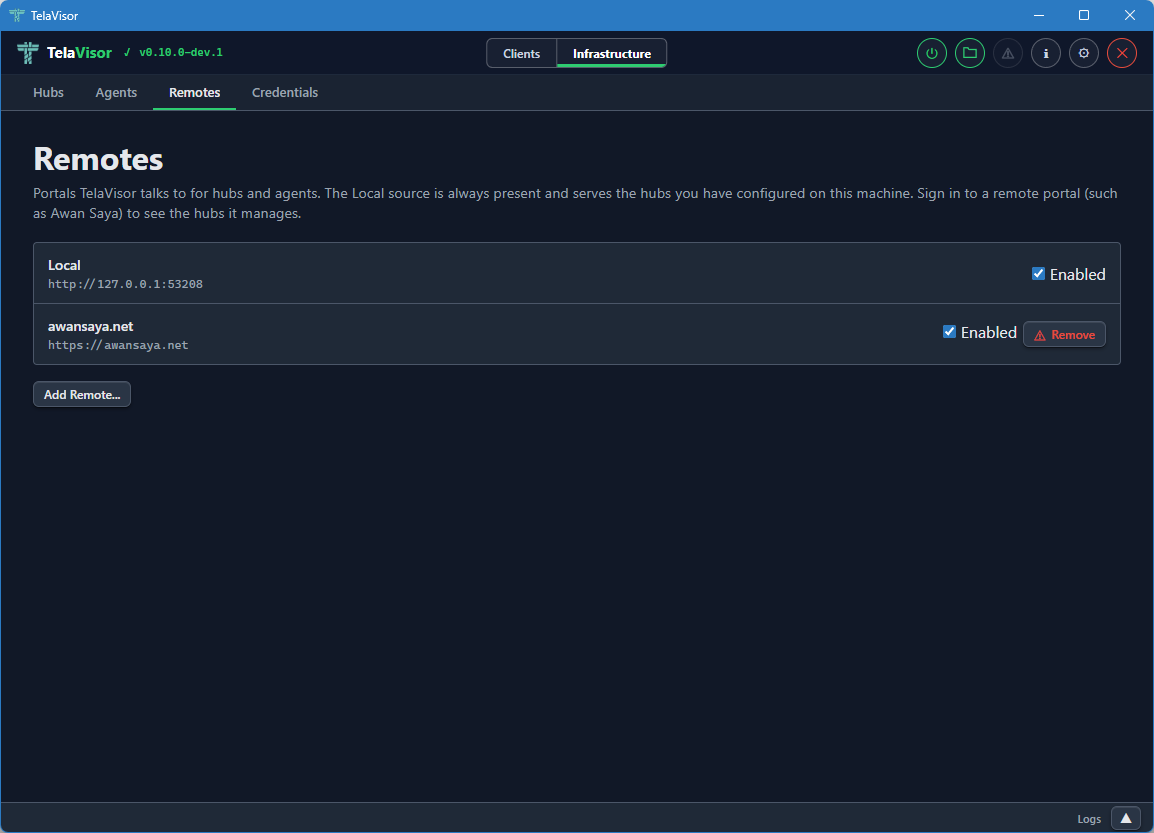

Adding a directory as a remote

On the client side, a hub directory is added as a remote:

tela remote add work https://directory.example.com

Once a remote is registered, the client resolves short hub names through it before falling back to the local hubs.yaml file:

tela machines -hub myhub # short name resolved via remote

tela connect -hub myhub -machine prod-web01

The client does not change otherwise. The same tela connect command works whether the user typed a full URL or a name that resolved through a directory. A user's CLI can register more than one remote, such as a self-hosted directory for internal hubs and a managed directory for cross-organization or customer hubs, with the same tela binary talking to both.
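The resolution order above can be sketched as follows. Treating any argument containing :// as a full URL, and checking multiple remotes in registration order, are assumptions for illustration; the Remote type and resolveHub are hypothetical.

```go
package main

import (
	"fmt"
	"strings"
)

// Remote is a registered directory and the hub names it knows.
// A real client would fill Hubs from the directory's /api/hubs list.
type Remote struct {
	Name string
	Hubs map[string]string // short name -> hub URL
}

// resolveHub turns a -hub argument into a URL: full URLs pass through,
// short names are tried against each remote, then against hubs.yaml.
func resolveHub(arg string, remotes []Remote, local map[string]string) (string, error) {
	if strings.Contains(arg, "://") {
		return arg, nil
	}
	for _, r := range remotes {
		if u, ok := r.Hubs[arg]; ok {
			return u, nil
		}
	}
	if u, ok := local[arg]; ok {
		return u, nil
	}
	return "", fmt.Errorf("hub %q not found in any remote or in hubs.yaml", arg)
}

func main() {
	remotes := []Remote{{Name: "work", Hubs: map[string]string{"myhub": "wss://hub.example.com"}}}
	local := map[string]string{"home": "wss://home.example.com"}
	for _, arg := range []string{"myhub", "home", "wss://other-hub.example.com"} {
		u, err := resolveHub(arg, remotes, local)
		fmt.Println(arg, "->", u, err)
	}
}
```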

Listing a hub in a directory

On the hub side, a hub registers itself with a directory through the telahubd portal subcommand:

telahubd portal add work https://directory.example.com

telahubd portal list

telahubd portal remove work

The portal add command discovers the directory's endpoints via /.well-known/tela, registers the hub through the directory's API, and stores the association in the hub's configuration. From that point on, any client whose remote points at the same directory can find the hub by name.

What a portal adds on top

The directory protocol is the floor. A portal is a directory plus whatever extras the operator wants to layer on. Typical additions:

- A multi-hub dashboard. Status, agents, sessions, and history aggregated across every hub the user has access to, in one browser tab.

- Identity beyond the hub. Personal application programming interface (API) tokens issued by the portal, often tied to an external identity provider, that the client uses to authenticate against the portal itself rather than against each individual hub.

- Multi-organization access control. Users belong to organizations, organizations have teams, teams own hubs and agents. The portal becomes the place where membership and permissions live.

- Web-based hub and agent administration parallel to TelaVisor's Infrastructure mode but accessible from any browser.

- Channel selectors for hub and agent self-update, the same controls exposed in TelaVisor.

- Activity logging and audit trails that span multiple hubs.

A portal does not weaken the underlying hubs. Each hub still authenticates and authorizes connections on its own, with its own tokens and its own access control list. The portal handles discovery, identity, and visibility, not trust delegation.

Two operating models

Two paths to a working directory. Same protocol, different operating models.

| Self-hosted directory | Managed directory |
|---|---|
| You implement /.well-known/tela and /api/hubs, or run an existing portal you control | A vendor runs the directory and the dashboard for you |
| Everything stays on your own infrastructure | Multi-hub visibility, personal API tokens, web console without operating the server |
| Suitable when compliance or sovereignty rule out a hosted option | Suitable when fleet visibility and onboarding speed matter more than self-hosting |
| Tela ships the protocol; you ship the server | Awan Saya is one such managed option, available on request |

The CLI does not care which one a remote points at. The same tela remote add command and the same name-resolution path work for both.

When you need a directory at all

If you are running a single hub for personal use, you do not need a directory or a portal. The hub stands alone, the client connects to it by URL, and the rest of this book applies as written. The directory layer becomes useful when:

- You have more than one hub and users start asking which one to connect to.

- You are providing remote access as a service across multiple customers.

- You want fleet-wide visibility from one screen instead of clicking through each hub's console in turn.

- You want to manage onboarding centrally instead of distributing tokens out of band for every hub.

If none of those apply yet, skip this chapter and come back when one of them does.

The path gateway

The path gateway is a built-in HTTP reverse proxy inside telad. It exposes one tunnel port and routes incoming HTTP requests to different local services based on URL path prefix, eliminating the need for a separate nginx, Caddy, or Traefik instance for tunnel-internal routing.

When to use a gateway

Use a gateway when you have several HTTP services on one machine and want to reach all of them through a single tunnel port. Common examples:

- A web frontend, a REST API, and a metrics endpoint running on the same host

- A multi-page web app with backend services on different ports

- A development stack you want accessible through one URL

You do not need a gateway when you have only one HTTP service (just expose it as a normal service), when your services use TCP rather than HTTP (expose them as normal TCP services), or when you already use a reverse proxy in front of your services and want to keep it as the edge.

How it works

Without a gateway, a client connecting to a multi-service application gets one binding per service port:

localhost:3000 → port 3000

localhost:4000 → port 4000

localhost:4100 → port 4100

The browser opens http://localhost:3000 and calls the API at localhost:4000. Same host, different port: under the browser's same-origin policy that is still a different origin, so the UI has to know the API's address (typically a hardcoded URL) and the API server has to send CORS headers, unless an extra proxy layer sits somewhere in between.

With a gateway, the client gets one binding:

localhost:8080 → HTTP

The browser opens http://localhost:8080/. The UI calls /api/users. The gateway sees the /api/ prefix and proxies the request to the local API service. Same origin. No CORS. No extra configuration.

Configuration

Gateway configuration lives in telad.yaml under each machine, alongside the services: list:

hub: wss://your-hub.example.com
token: "<your-agent-token>"

machines:
  - name: barn
    services:
      - port: 5432
        name: postgres
        proto: tcp
    gateway:
      port: 8080
      routes:
        - path: /api/
          target: 4000
        - path: /metrics/
          target: 4100
        - path: /
          target: 3000

This declares one direct TCP service (PostgreSQL on port 5432, exposed through the tunnel as usual) and a gateway listening on port 8080 with three routes. The HTTP services on ports 3000, 4000, and 4100 are not in the services: list -- they are private to the machine and reachable only through the gateway. The tunnel exposes port 8080 and port 5432.

Field reference

| Field | Required | Description |
|---|---|---|
| gateway.port | Yes | Port the gateway listens on inside the WireGuard tunnel. Does not need to match any local service port. |
| gateway.routes | Yes | List of routes, each mapping a URL path prefix to a local target port. |
| routes[].path | Yes | URL path prefix to match (e.g. /api/, /admin/, /). |
| routes[].target | Yes | Local TCP port to proxy matched requests to. |
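The Required column translates directly into a validation pass. A sketch, assuming the field table is complete; the checks that paths start with / and that prefixes are not duplicated are hypothetical additions, not documented telad behavior. It uses errors.Join (Go 1.20+) to report every problem at once.

```go
package main

import (
	"errors"
	"fmt"
	"strings"
)

// Route and Gateway mirror the gateway: block in telad.yaml.
type Route struct {
	Path   string // URL path prefix, e.g. "/api/"
	Target int    // local TCP port
}

type Gateway struct {
	Port   int
	Routes []Route
}

func validPort(p int) bool { return p >= 1 && p <= 65535 }

// validateGateway applies the required-field rules plus two HYPOTHETICAL
// sanity checks. It returns nil when no problems are found.
func validateGateway(g Gateway) error {
	var errs []error
	if !validPort(g.Port) {
		errs = append(errs, fmt.Errorf("gateway.port %d is not a valid port", g.Port))
	}
	if len(g.Routes) == 0 {
		errs = append(errs, errors.New("gateway.routes must not be empty"))
	}
	seen := map[string]bool{}
	for i, r := range g.Routes {
		if !strings.HasPrefix(r.Path, "/") {
			errs = append(errs, fmt.Errorf("routes[%d].path %q must start with /", i, r.Path))
		}
		if seen[r.Path] {
			errs = append(errs, fmt.Errorf("routes[%d].path %q is duplicated", i, r.Path))
		}
		seen[r.Path] = true
		if !validPort(r.Target) {
			errs = append(errs, fmt.Errorf("routes[%d].target %d is not a valid port", i, r.Target))
		}
	}
	return errors.Join(errs...)
}

func main() {
	g := Gateway{Port: 8080, Routes: []Route{{"/api/", 4000}, {"/metrics/", 4100}, {"/", 3000}}}
	fmt.Println(validateGateway(g))
}
```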

Route matching

Routes are matched by longest path prefix first. The order in the YAML file does not matter; telad sorts them at startup. A route with path: / matches any request not claimed by a more specific route.

With these routes:

routes:
  - path: /
    target: 3000
  - path: /api/v2/
    target: 4002
  - path: /api/
    target: 4000

A request to /api/v2/users matches /api/v2/ (target 4002). A request to /api/health matches /api/ (target 4000). A request to /about matches / (target 3000).
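Longest-prefix matching is easiest to implement by sorting routes by path length once at startup and then taking the first prefix match, which is why YAML order does not matter. The sketch below does that and wires the result to net/http/httputil reverse proxies. It illustrates the technique and is not telad's code; forwarding the full request path to the target without stripping the prefix is an assumption.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httputil"
	"net/url"
	"sort"
	"strings"
)

// Route maps a URL path prefix to a local TCP port.
type Route struct {
	Path   string
	Target int
}

// sortRoutes returns a copy ordered longest path first, so the first
// prefix match is always the most specific route.
func sortRoutes(routes []Route) []Route {
	out := append([]Route(nil), routes...)
	sort.SliceStable(out, func(i, j int) bool {
		return len(out[i].Path) > len(out[j].Path)
	})
	return out
}

// match returns the target port for a request path.
func match(sorted []Route, path string) (int, bool) {
	for _, r := range sorted {
		if strings.HasPrefix(path, r.Path) {
			return r.Target, true
		}
	}
	return 0, false
}

// newGateway proxies each request to 127.0.0.1:<target> for the longest
// matching prefix. The full path is forwarded unchanged.
func newGateway(routes []Route) http.Handler {
	sorted := sortRoutes(routes)
	proxies := map[int]*httputil.ReverseProxy{}
	for _, r := range sorted {
		u := &url.URL{Scheme: "http", Host: fmt.Sprintf("127.0.0.1:%d", r.Target)}
		proxies[r.Target] = httputil.NewSingleHostReverseProxy(u)
	}
	return http.HandlerFunc(func(w http.ResponseWriter, req *http.Request) {
		target, ok := match(sorted, req.URL.Path)
		if !ok {
			http.NotFound(w, req)
			return
		}
		proxies[target].ServeHTTP(w, req)
	})
}

func main() {
	routes := []Route{{"/", 3000}, {"/api/v2/", 4002}, {"/api/", 4000}}
	sorted := sortRoutes(routes)
	for _, p := range []string{"/api/v2/users", "/api/health", "/about"} {
		target, _ := match(sorted, p)
		fmt.Printf("%s -> %d\n", p, target)
	}
	// To serve: http.ListenAndServe("127.0.0.1:8080", newGateway(routes))
}
```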

Connecting through a gateway

The gateway appears to clients as a service named gateway. Use it in a connection profile like any other service:

# ~/.tela/profiles/barn.yaml
connections:
  - hub: wss://your-hub.example.com
    machine: barn
    services:
      - name: gateway
      - name: postgres

tela connect -profile barn

Output:

Services available:

localhost:8080 → HTTP

localhost:5432 → port 5432

Port labels come from the well-known port table (22=SSH, 80/8080=HTTP, 3389=RDP, etc.). Ports not in the table show as port N.

If port 8080 conflicts with something local, override it:

services:
  - name: gateway
    local: 18080

Direct access alongside the gateway

You can expose a service both through the gateway (for browser access) and as a direct service (for tools like curl or Postman). Add it to the agent's services: list as well as the gateway routes, then include it in the profile:

# telad.yaml
machines:
  - name: barn
    services:
      - port: 4000
        name: api
        proto: http
    gateway:
      port: 8080
      routes:
        - path: /api/
          target: 4000
        - path: /
          target: 3000

# profile
connections:
  - hub: wss://your-hub.example.com
    machine: barn
    services:
      - name: gateway
      - name: api
        local: 14000

Now http://localhost:8080/api/... reaches the API through the gateway, and http://localhost:14000/... reaches it directly.

Cross-environment use

When you maintain the same application across several environments, each running its own telad, a profile can connect to multiple gateways simultaneously:

connections:
  - hub: wss://prod-hub.example.com
    machine: app
    services:
      - name: gateway
  - hub: wss://staging-hub.example.com
    machine: app
    services:
      - name: gateway
        local: 18080

When connecting to both environments simultaneously, use local: overrides to put them on different ports. Without an override, both gateways would try to bind localhost:8080 and the second would fall back to localhost:18080. Making it explicit avoids relying on fallback behavior. The routing logic stays in each environment's telad.yaml, not in the client profile.

Limitations

The gateway does not terminate TLS (the WireGuard tunnel already provides end-to-end encryption). It does not authenticate users (that is the hub's token and ACL layer). It does not load-balance across instances. It does not proxy WebSocket connections -- if you need WebSocket access to a service, expose it as a separate service alongside the gateway. It is not a replacement for an internet-facing reverse proxy with TLS termination, rate limiting, or WAF rules.

For the design rationale and the relationship between the path gateway and the other gateway primitives in Tela, see Gateways in the Design Rationale section. For a step-by-step setup walkthrough and troubleshooting, see Set up a path-based gateway.

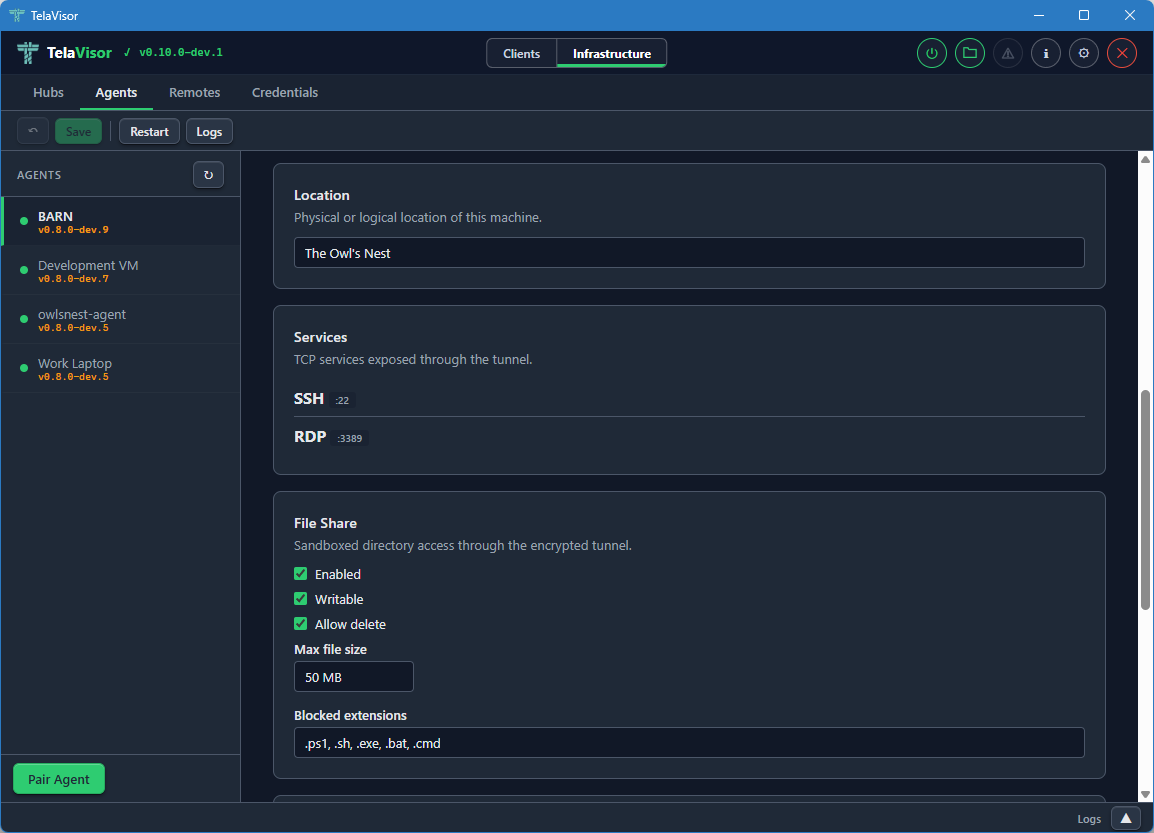

File sharing

Tela file sharing lets authorized clients browse, download, upload, rename, move, and delete files on a remote machine through the same encrypted WireGuard tunnel that carries TCP service traffic. No SSH, no SFTP, and no separate credentials are required beyond a Tela token with connect permission on the machine.

File sharing is off by default and must be explicitly enabled per machine by the agent operator.

Enabling file sharing

Add a shares list to a machine in telad.yaml. Each entry defines one shared directory with its own name and access controls.

machines:
  - name: barn
    ports: [22, 3389]
    shares:
      - name: files
        path: /home/shared

telad creates each share directory on startup if it does not exist. Each path must be absolute. telad refuses to start if any share path is a system directory (/, /etc, C:\Windows, and similar).

If you are upgrading from an older configuration that used fileShare: (singular), that key is still accepted and is synthesized as a share named legacy. It will be removed at 1.0. Migrate to the shares list.

Configuration reference

| Field | Type | Default | Description |
|---|---|---|---|
| name | string | required | Share name. Used in WebDAV paths (/machine/share/path) and the -share NAME flag on tela files commands. |
| path | string | required | Absolute path to the shared directory. |
| writable | bool | false | Allows clients to upload files and create directories. When false, only list and download are available. |
| allowDelete | bool | false | Allows clients to delete files and empty directories. Requires writable: true. |
| maxFileSize | string | 50MB | Maximum size of a single uploaded file. Accepts KB, MB, and GB suffixes. |
| maxTotalSize | string | none | Maximum total size of all files in the shared directory. Uploads that would exceed this limit are rejected. |
| allowedExtensions | []string | [] | Whitelist of file extensions. Empty means all extensions are allowed, subject to blockedExtensions. |
| blockedExtensions | []string | see description | Blacklist of file extensions. By default blocks .exe, .bat, .cmd, .ps1, and .sh. Applied after allowedExtensions. |
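The table's rules combine into a single upload check. A sketch under two stated assumptions: size suffixes are binary multiples (1 MB = 1,048,576 bytes), and extensions are matched by suffix so multi-part extensions such as .tar.gz work (filepath.Ext would return only .gz). parseSize, hasExt, Share, and checkUpload are hypothetical names, not telad's.

```go
package main

import (
	"errors"
	"fmt"
	"strconv"
	"strings"
)

// parseSize converts "50MB"-style values to bytes (binary multiples assumed).
func parseSize(s string) (int64, error) {
	s = strings.ToUpper(strings.TrimSpace(s))
	units := []struct {
		suffix string
		mult   int64
	}{{"GB", 1 << 30}, {"MB", 1 << 20}, {"KB", 1 << 10}}
	for _, u := range units {
		if strings.HasSuffix(s, u.suffix) {
			n, err := strconv.ParseInt(strings.TrimSuffix(s, u.suffix), 10, 64)
			if err != nil {
				return 0, fmt.Errorf("bad size %q: %w", s, err)
			}
			return n * u.mult, nil
		}
	}
	return 0, fmt.Errorf("bad size %q: want a KB, MB, or GB suffix", s)
}

// hasExt matches by case-insensitive suffix so ".tar.gz" works.
func hasExt(name string, exts []string) bool {
	lower := strings.ToLower(name)
	for _, e := range exts {
		if strings.HasSuffix(lower, strings.ToLower(e)) {
			return true
		}
	}
	return false
}

// Share holds the policy fields from the configuration table.
type Share struct {
	Writable          bool
	MaxFileSize       int64
	MaxTotalSize      int64 // 0 means no limit
	AllowedExtensions []string
	BlockedExtensions []string
}

// checkUpload applies: writable, allow list, block list (after the allow
// list), per-file size, then total size including bytes already used.
func checkUpload(sh Share, name string, size, usedBytes int64) error {
	switch {
	case !sh.Writable:
		return errors.New("share is read-only")
	case len(sh.AllowedExtensions) > 0 && !hasExt(name, sh.AllowedExtensions):
		return fmt.Errorf("%s: extension not in allowedExtensions", name)
	case hasExt(name, sh.BlockedExtensions):
		return fmt.Errorf("%s: extension is blocked", name)
	case size > sh.MaxFileSize:
		return fmt.Errorf("%s: %d bytes exceeds maxFileSize", name, size)
	case sh.MaxTotalSize > 0 && usedBytes+size > sh.MaxTotalSize:
		return fmt.Errorf("%s: upload would exceed maxTotalSize", name)
	}
	return nil
}

func main() {
	maxFile, _ := parseSize("200MB")
	maxTotal, _ := parseSize("2GB")
	sh := Share{Writable: true, MaxFileSize: maxFile, MaxTotalSize: maxTotal,
		AllowedExtensions: []string{".zip", ".tar.gz", ".yaml"},
		BlockedExtensions: []string{".exe", ".bat", ".cmd", ".ps1", ".sh"}}
	fmt.Println(checkUpload(sh, "release.tar.gz", 5<<20, 0))
	fmt.Println(checkUpload(sh, "notes.txt", 1024, 0))
}
```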

A read-only log share

shares:
  - name: logs
    path: /var/log/app
    writable: false

A writable staging area

shares:
  - name: staging
    path: /opt/staging
    writable: true
    allowDelete: true
    maxFileSize: 200MB
    maxTotalSize: 2GB
    allowedExtensions: [".zip", ".tar.gz", ".yaml"]

Multiple shares on one machine

shares:
  - name: logs
    path: /var/log/app
    writable: false
  - name: uploads
    path: /opt/uploads
    writable: true
    allowDelete: true
    maxFileSize: 50MB

Access from the CLI

The tela files subcommand provides operations on connected machines. An active tunnel must be established with tela connect first.

# List files in a share

tela files ls -machine barn -share files

tela files ls -machine barn -share files subdir/

# Download a file

tela files get -machine barn -share files report.pdf

tela files get -machine barn -share files report.pdf -o /local/report.pdf

# Upload a file (requires writable: true)

tela files put -machine barn -share files localfile.txt

tela files put -machine barn -share files localfile.txt remote-name.txt

# Delete a file (requires allowDelete: true)

tela files rm -machine barn -share files old-log.txt

# Create a directory (requires writable: true)

tela files mkdir -machine barn -share files archive/2026

# Rename a file or directory (requires writable: true)

tela files rename -machine barn -share files old-name.txt new-name.txt

# Move a file or directory (requires writable: true)

tela files mv -machine barn -share files logs/jan.txt archive/2026/jan.txt

# Show file sharing status for a machine (lists all shares)

tela files info -machine barn

Mounting as a local drive

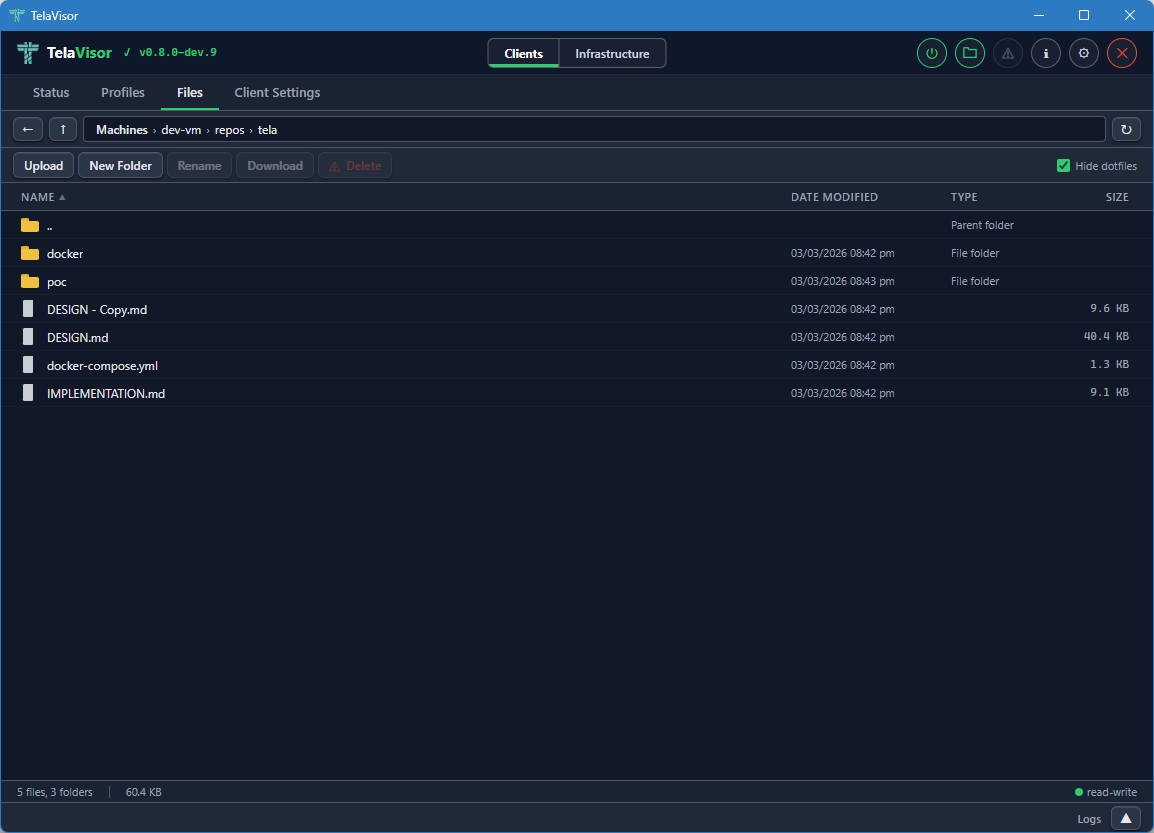

tela mount starts a WebDAV server that exposes Tela file shares as a local drive. Each connected machine with file sharing enabled appears as a top-level folder, with each share as a subfolder inside it (/machine/share/path).

# Windows: mount as drive letter T:

tela mount -mount T:

# macOS/Linux: mount to a directory

tela mount -mount ~/tela

No kernel drivers or third-party software are required. On Windows this uses the built-in WebDAV client (WebClient service). On macOS and Linux it uses the OS WebDAV mount support.

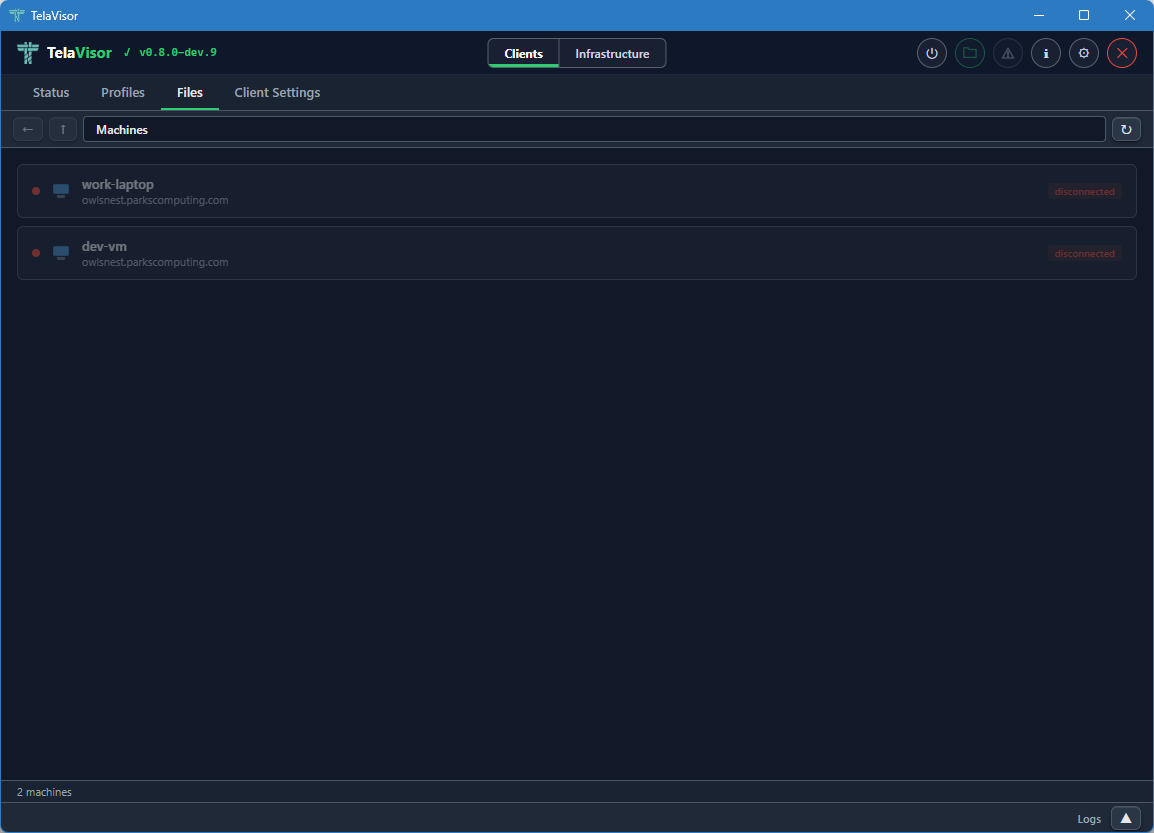

Access from TelaVisor

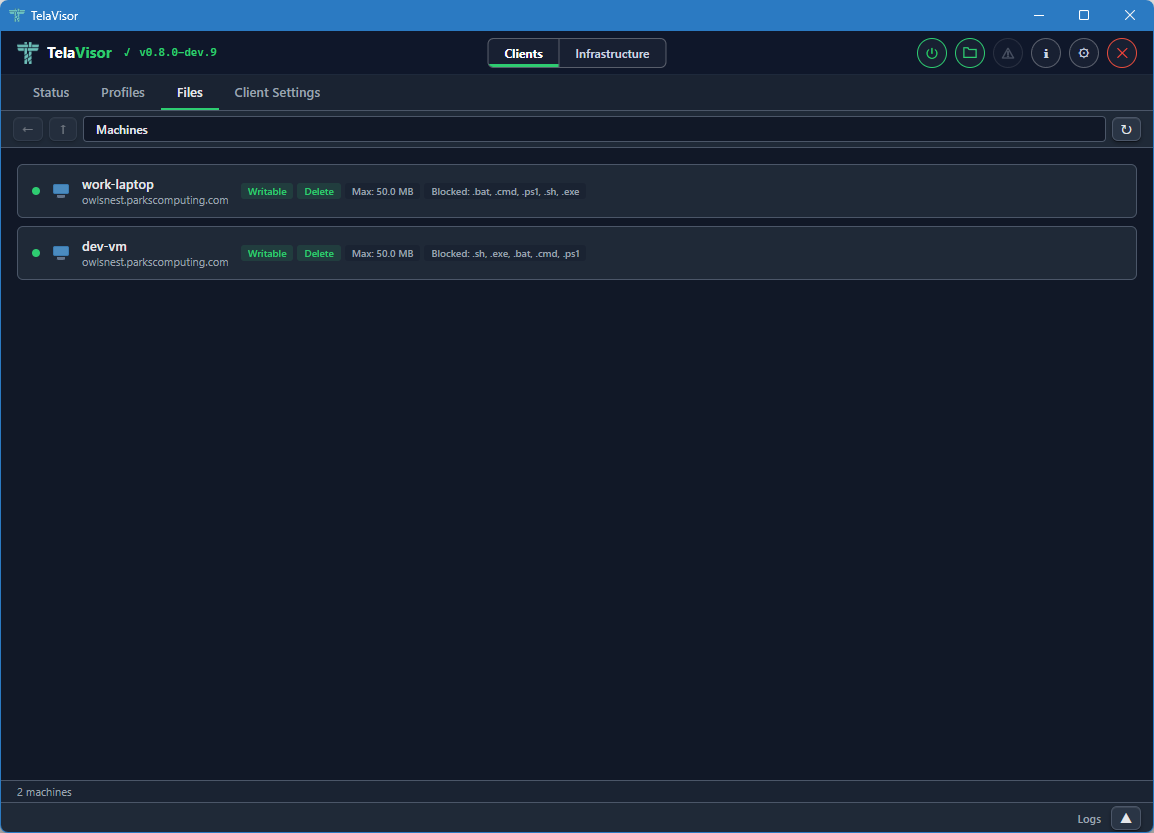

The Files tab in TelaVisor provides a graphical file browser for machines with file sharing enabled. It shows file name, size, and modification time. You can download files via the system file dialog, upload files (when writable: true), delete files (when allowDelete: true), navigate subdirectories with breadcrumb navigation, and drag and drop files to upload.

The machine list in the Connections view shows a file-sharing indicator when a machine advertises the capability, distinguishing between read-only and read-write configurations.

Security

File sharing uses the existing connect permission. A token that can connect to a machine can use file sharing on that machine. No separate permission is required.

All file operations are sandboxed to the declared directory. Path traversal is rejected at the protocol level: the server validates every client-supplied path using filepath.Rel to confirm it cannot escape the sandbox, and uses os.Lstat to reject symlinks. No file operation is delegated to OS-level permissions alone.

The shared directory is never accessible without an active authenticated Tela session. File contents travel inside the WireGuard tunnel as ciphertext. The hub sees nothing different from any other tunnel traffic.

For the design rationale behind these choices, see File sharing in the Design Rationale section.

Upstreams

An upstream is a TCP forwarding rule inside telad that intercepts a local service's outbound dependency calls and routes them to a configurable target. A service calls localhost:5432 expecting to reach its database; telad listens on that port and forwards the connection to wherever the database actually is.

Upstreams start when telad starts and run independently of any tunnel session. They provide a dispatch layer that you can change by editing a YAML file, without touching application code, containers, or environment variables.

Configuration

Upstreams are declared per machine in telad.yaml:

machines:
  - name: barn
    ports: [8080]
    upstreams:
      - port: 5432
        target: db.internal:5432
        name: postgres
      - port: 6379
        target: cache.internal:6379
        name: redis

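telad binds port 5432 and port 6379 on all interfaces immediately on startup. Any process on the machine that connects to those ports (including via localhost) gets forwarded to the respective targets. Because the listeners are on all interfaces, any other host that can reach this machine on those ports is forwarded as well.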

Field reference

| Field | Required | Description |
|---|---|---|
| port | Yes | Local port to listen on. telad binds 0.0.0.0:<port>. |
| target | Yes | Address to forward connections to, in host:port form. |
| name | No | Human-readable label used in log output. |

What upstreams are for

The typical use case is service-to-service dependency routing in development and staging environments.

A web service configured to connect to localhost:5432 works against a local database in development. In staging, the database is on a separate machine at db.staging.internal:5432. Without upstreams, changing environments means changing the application's configuration, rebuilding a container, or updating environment variables.

With an upstream, the application configuration stays the same in every environment. You change the target in telad.yaml and restart telad. The application never knows the database moved.

# telad.yaml on the staging machine
upstreams:
  - port: 5432
    target: db.staging.internal:5432
    name: postgres

The application calls localhost:5432. telad forwards to db.staging.internal:5432. No application change required.

Upstreams through a Tela tunnel

The upstream target field accepts any reachable host:port, including the deterministic loopback addresses that tela connect assigns to remote machines. When a machine runs both telad (as an agent registering its own services) and tela (as a client connected to a remote machine), an upstream can bridge the two.

For example:

- Machine A runs telad and exposes a service on port 8080.

- Machine B runs tela connect to machine A. The service on machine A becomes reachable on machine B at localhost:PORT, for example localhost:8080 if that port is free, or localhost:18080 if it is taken. Use tela status on machine B to find the exact port.

- Machine B also runs telad with an upstream: port: 8080, target: localhost:8080 (substitute the actual bound port).

- Any application on machine B that calls localhost:8080 reaches the service on machine A through the tunnel.

This is an advanced pattern. For most cases, direct service exposure through the tunnel is simpler.

Upstreams are not gateways

Upstreams and the path gateway are both forwarding primitives in telad, but they operate differently:

- The upstream intercepts outbound calls from services running on the agent machine and routes them to a dependency. It is invisible to the services using it.

- The path gateway accepts inbound HTTP connections through the WireGuard tunnel and routes them to local services by URL path. It is visible to connecting clients as a named service.

Use an upstream when a service needs to reach a dependency at a different address than it expects. Use a gateway when clients connecting through Tela need to reach multiple HTTP services through one tunnel port.

Hub administration

The tela admin subcommand manages a hub's tokens, access permissions, agent lifecycle, and portal registrations from the command line. All operations require a token with owner or admin role. Changes take effect immediately and persist to the hub's configuration file. No hub restart is needed.

Authentication

Every tela admin command requires a hub URL and an owner or admin token. User-role tokens are rejected. The owner token is printed once when you run telahubd user bootstrap and is never displayed again.

tela admin tokens list -hub wss://hub.example.com -token <owner-token>

If the token is omitted, tela resolves it in this order:

- -token flag

- TELA_OWNER_TOKEN environment variable

- TELA_TOKEN environment variable

- Credential store: the token stored by tela login for the hub URL

In practice, you log in once and omit the token flag on every subsequent command:

tela login wss://hub.example.com

# Token: (paste owner token, press Enter)

tela admin tokens list -hub wss://hub.example.com

The -hub flag accepts a short name if you have configured remotes, but the full URL is always accepted.

Concepts

A hub's authorization state has two parts: identities (tokens) and permissions.

An identity is a named token. It has a role: owner, admin, or user (the default). Owner and admin tokens bypass all machine permission checks. User tokens are subject to per-machine access control. A viewer role exists but is reserved for the hub's auto-generated console token; it cannot be assigned when creating tokens.

Machine permissions determine what a user-role token can do on a specific machine: connect, register, and manage. These are stored as entries in the access control list. A wildcard machine ID of * applies the permission to all machines.

The tokens resource manages identities. The access resource manages the permissions attached to those identities. The rotate command replaces the secret value of a token without changing its identity or permissions.

For the formal definition of roles and permissions, see Appendix C: Access model.

Tokens

# List all identities

tela admin tokens list -hub wss://hub.example.com

# Add a new identity (default role: user)

tela admin tokens add <id> -hub wss://hub.example.com

# Add with elevated role

tela admin tokens add <id> -hub wss://hub.example.com -role admin

# Remove an identity

tela admin tokens remove <id> -hub wss://hub.example.com

tokens add prints the token value once and never again. Copy it before closing the terminal. If you lose it, use rotate to issue a new one.

tokens remove deletes the identity and all its machine permissions. There is no soft delete or recovery.

The default role for a new identity is user.

Roles

| Role | Description |
|---|---|
| owner | Full access to all hub operations, including owner-only actions |
| admin | Full access to all hub operations except owner-only actions |
| user | Access to machines governed by per-machine permissions |
| viewer | Read-only access to machines they have connect permission on |

Access

The access resource provides a unified view of identities and their per-machine permissions.

# List all identities and their permissions

tela admin access -hub wss://hub.example.com

# Grant permissions to an identity on a machine

tela admin access grant <id> <machine> <perms> -hub wss://hub.example.com

# Grant permissions on all machines

tela admin access grant <id> '*' connect -hub wss://hub.example.com

# Revoke all permissions for an identity on a machine

tela admin access revoke <id> <machine> -hub wss://hub.example.com

# Rename an identity

tela admin access rename <id> <new-id> -hub wss://hub.example.com

# Remove an identity and all its permissions

tela admin access remove <id> -hub wss://hub.example.com

Permissions are specified as a comma-separated list. Valid values are connect, register, and manage.

# Grant connect and register on a specific machine

tela admin access grant alice barn connect,register -hub wss://hub.example.com

A * machine ID grants the permission on every machine, including ones registered after the grant is made.
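A sketch of the permission model described above, assuming a hypothetical in-memory ACL: owner and admin roles bypass machine checks, and other tokens need the permission on the machine itself or on the * entry. Because * is checked at request time, it naturally covers machines registered after the grant. Identity, parsePerms, and allowed are illustrative names.

```go
package main

import (
	"fmt"
	"strings"
)

// Identity is a HYPOTHETICAL in-memory view of one token's role and ACL.
type Identity struct {
	Role  string                     // "owner", "admin", "user", ...
	Perms map[string]map[string]bool // machine ID (or "*") -> permission set
}

// parsePerms splits "connect,register" and rejects unknown values.
func parsePerms(s string) (map[string]bool, error) {
	valid := map[string]bool{"connect": true, "register": true, "manage": true}
	out := map[string]bool{}
	for _, p := range strings.Split(s, ",") {
		p = strings.TrimSpace(p)
		if !valid[p] {
			return nil, fmt.Errorf("unknown permission %q", p)
		}
		out[p] = true
	}
	return out, nil
}

// allowed: owner and admin bypass machine checks; everyone else needs the
// permission on the machine or on the "*" wildcard entry.
func allowed(id Identity, machine, perm string) bool {
	if id.Role == "owner" || id.Role == "admin" {
		return true
	}
	return id.Perms[machine][perm] || id.Perms["*"][perm]
}

func main() {
	perms, err := parsePerms("connect,register")
	if err != nil {
		panic(err)
	}
	alice := Identity{Role: "user", Perms: map[string]map[string]bool{"barn": perms}}
	fmt.Println(allowed(alice, "barn", "connect"), allowed(alice, "barn", "manage"))
}
```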

Rotate

rotate generates a new secret value for an existing identity without changing its name, role, or permissions. Use it to revoke a leaked token while keeping the identity intact.

tela admin rotate <id> -hub wss://hub.example.com

The new token value is printed once. The old token stops working immediately.

Pair codes

A pairing code is a short, single-use code that lets you onboard a user or agent without distributing a raw token. The recipient redeems the code to receive a permanent token.

# Generate a connect code for machine barn (default expiry 24h)

tela admin pair-code barn -hub wss://hub.example.com

# Set a custom expiry

tela admin pair-code barn -hub wss://hub.example.com -expires 48h

# Generate a register code for a new agent

tela admin pair-code barn -hub wss://hub.example.com -type register

# Grant access to all machines

tela admin pair-code barn -hub wss://hub.example.com -machines '*'

The output includes the code and the redemption command to give to the recipient:

Generated pairing code: ABCD-1234

Expires: 2026-04-15T10:30:00Z

Client pairing command:

tela pair -hub wss://hub.example.com -code ABCD-1234

Codes expire between 10 minutes and 7 days after generation. The -expires flag accepts Go duration syntax, such as 10m or 24h. Go's duration syntax has no day unit, so write seven days as 168h.

For how users and agents redeem codes, see Credentials and pairing.

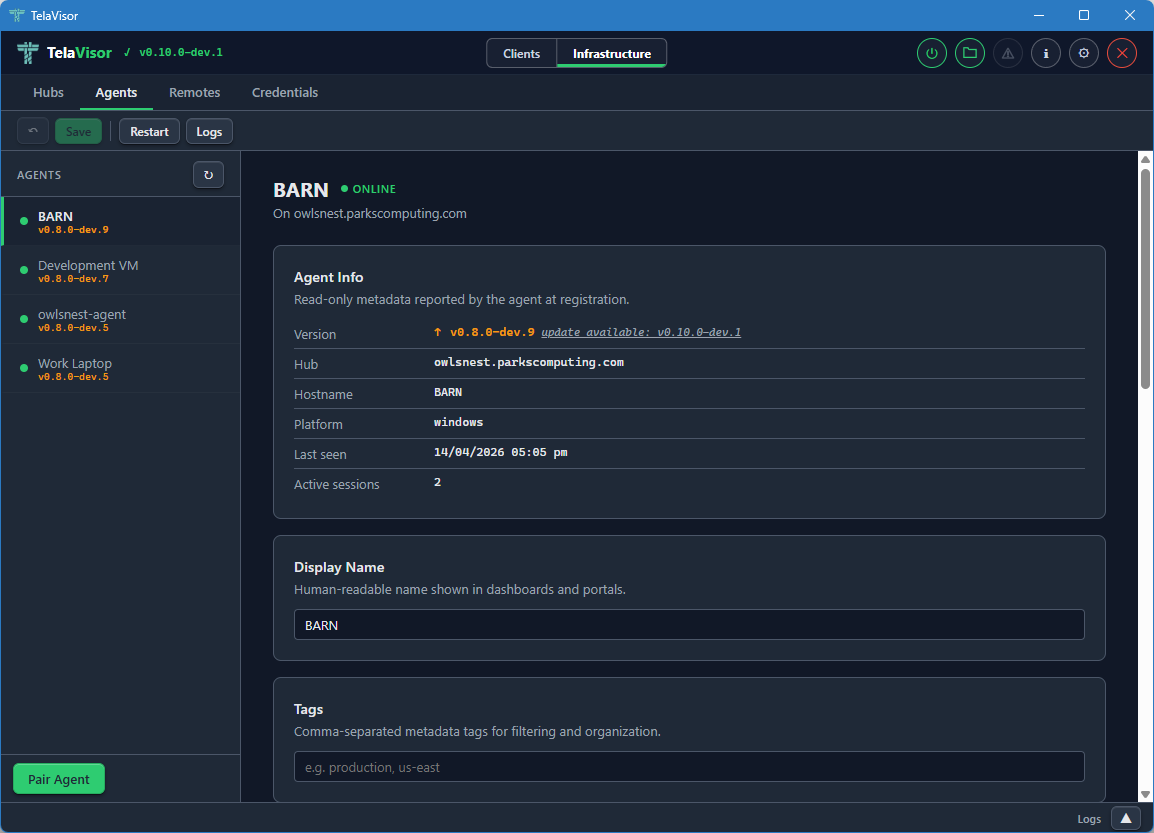

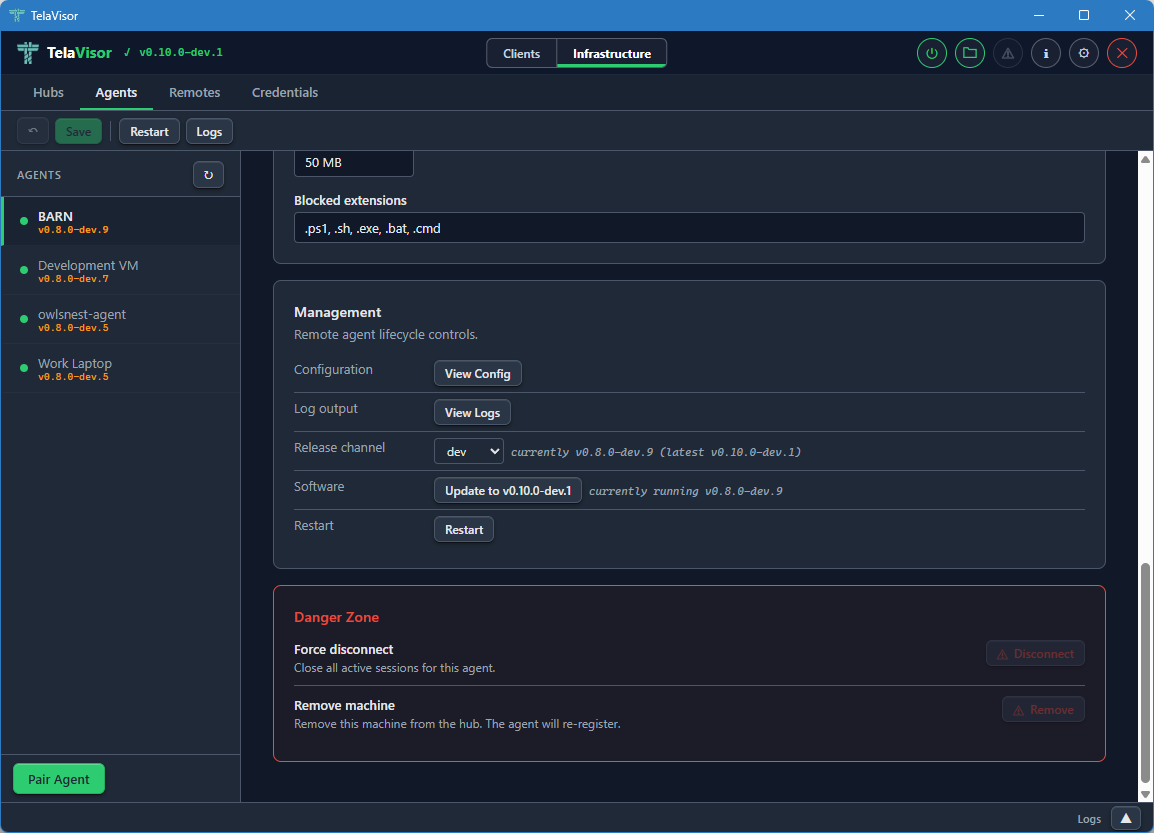

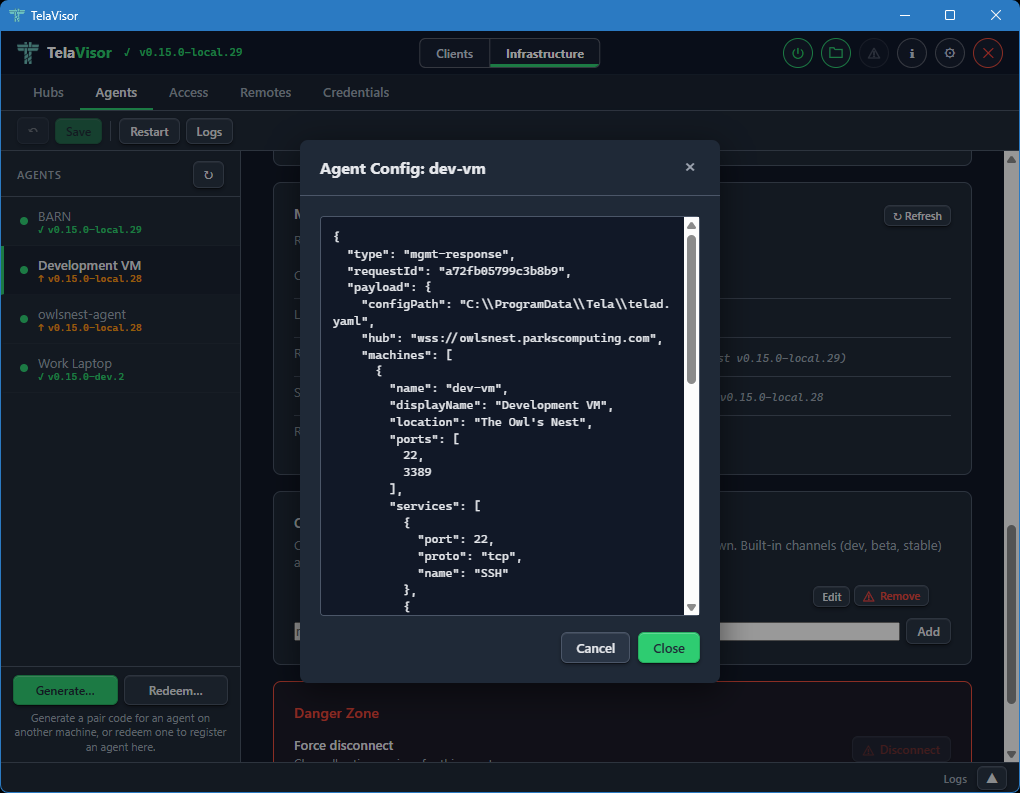

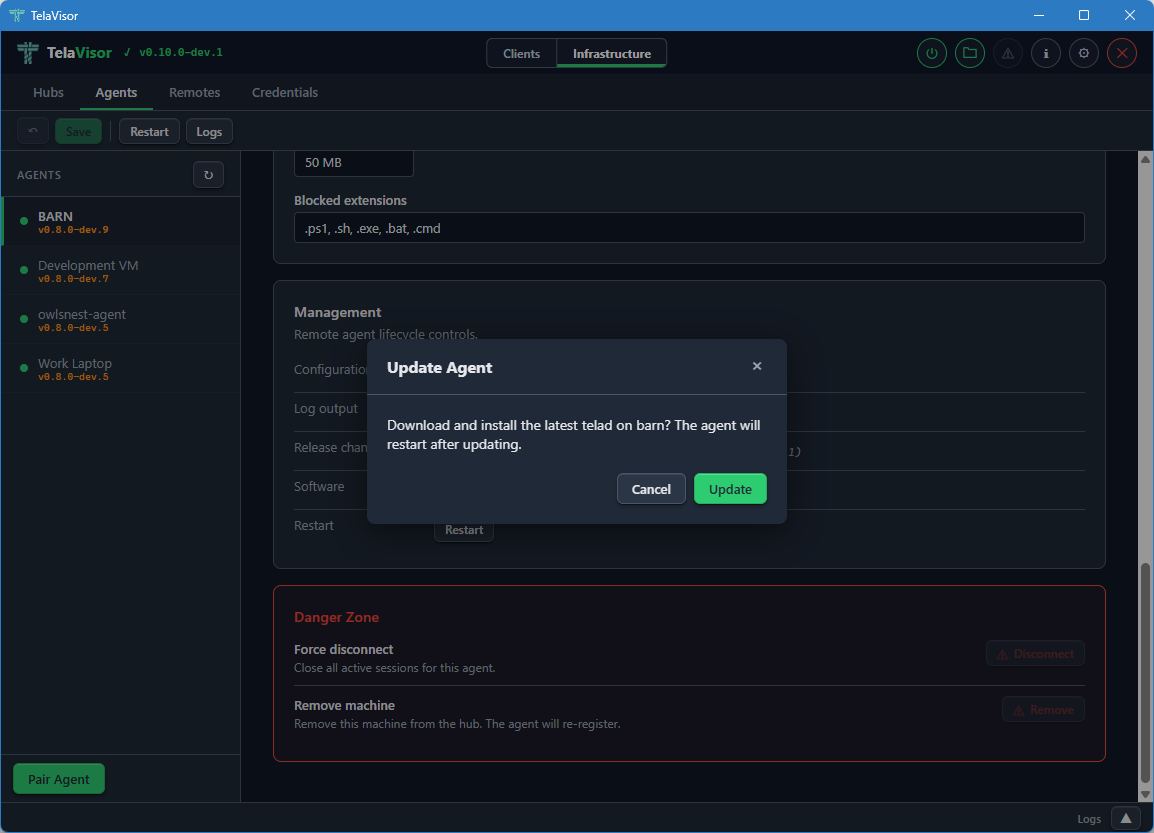

Agent

The agent resource lets you inspect and manage remote telad instances through the hub, without a direct connection to the agent machine.

# List registered agents

tela admin agent list -hub wss://hub.example.com

# Show an agent's configuration

tela admin agent config -machine barn -hub wss://hub.example.com

# Update an agent's configuration

tela admin agent set -machine barn -hub wss://hub.example.com '<json>'

# View agent logs

tela admin agent logs -machine barn -hub wss://hub.example.com

tela admin agent logs -machine barn -hub wss://hub.example.com -n 200

# Restart an agent

tela admin agent restart -machine barn -hub wss://hub.example.com

# Trigger a self-update

tela admin agent update -machine barn -hub wss://hub.example.com

tela admin agent update -machine barn -hub wss://hub.example.com -version v0.9.1

# Show the agent's current release channel

tela admin agent channel -machine barn -hub wss://hub.example.com

# Set the agent's release channel

tela admin agent channel -machine barn -hub wss://hub.example.com set stable

Agent management commands are forwarded through the hub to the agent and wait for a response. If the agent is offline or does not respond within 30 seconds, the command returns an error.

Hub

The hub resource manages the hub itself.

# Show hub status

tela admin hub status -hub wss://hub.example.com

# View hub logs

tela admin hub logs -hub wss://hub.example.com

tela admin hub logs -hub wss://hub.example.com -n 200

# Restart the hub

tela admin hub restart -hub wss://hub.example.com

# Trigger a self-update

tela admin hub update -hub wss://hub.example.com

tela admin hub update -hub wss://hub.example.com -version v0.9.1

# Show the current release channel

tela admin hub channel -hub wss://hub.example.com

# Set the release channel

tela admin hub channel set stable -hub wss://hub.example.com

Portals

Portals are external registries that list hubs for discovery. The portals resource manages which portals a hub is registered with.

# List registered portals

tela admin portals list -hub wss://hub.example.com

# Add a portal

tela admin portals add <name> -portal-url <url> -hub wss://hub.example.com

# Remove a portal

tela admin portals remove <name> -hub wss://hub.example.com

Portal changes take effect immediately. The hub begins syncing with a newly added portal without a restart.

Flag placement

All tela admin subcommands accept flags after positional arguments. Both of these are equivalent:

tela admin tokens add alice -hub wss://hub.example.com -role admin

tela admin tokens add -hub wss://hub.example.com -role admin alice

Hub web console

The hub ships with a built-in web console served at its HTTP address. Point a browser at http://hub.example.com:PORT/ (or https:// if TLS is configured) and the console loads automatically.

No separate installation is required. The console is embedded in the telahubd binary.

Sections

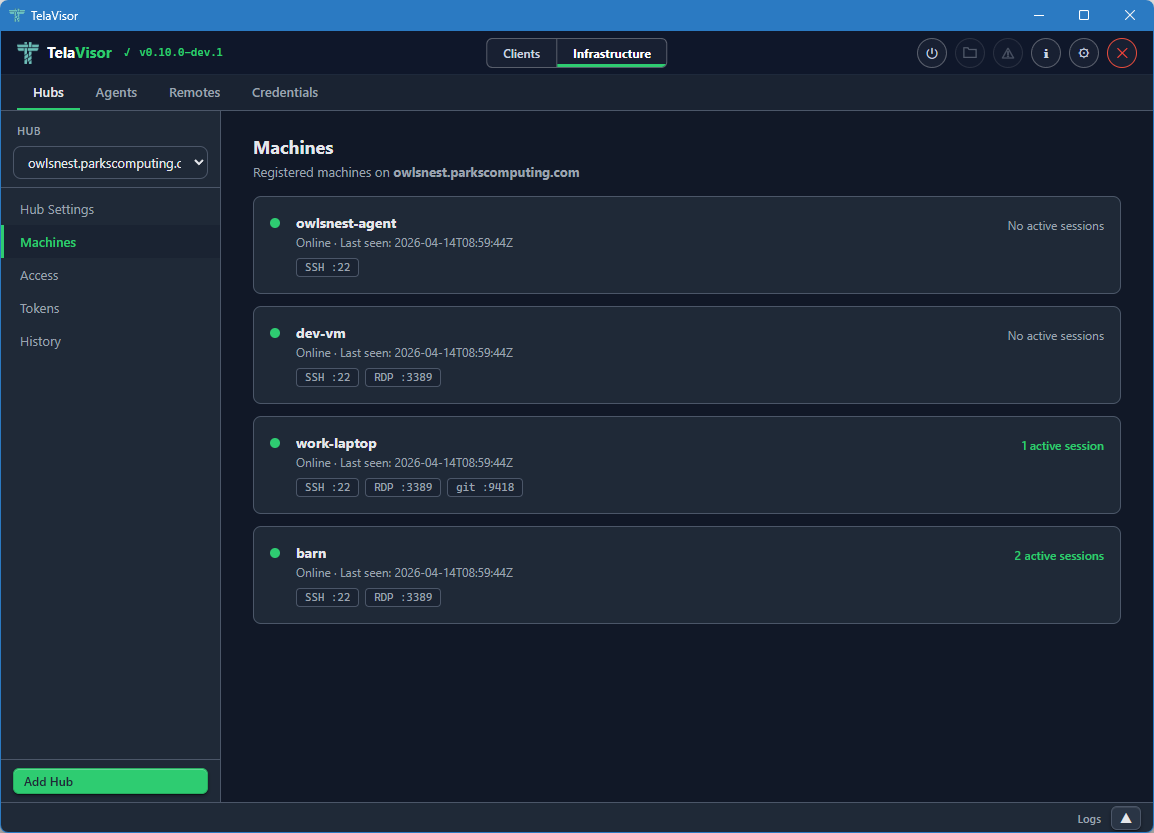

Machines

The Machines section lists every registered agent and its services. Each row shows the machine name, registered services (name and port), current status, and active session count.

Status indicators:

| Indicator | Meaning |
|---|---|
| Green dot | Online -- agent connected within the last 30 seconds |
| Yellow dot | Stale -- agent has not sent a keepalive recently |
| No dot | Offline |

Click the Refresh button to reload from the hub. The "last updated" timestamp shows when data was last fetched.

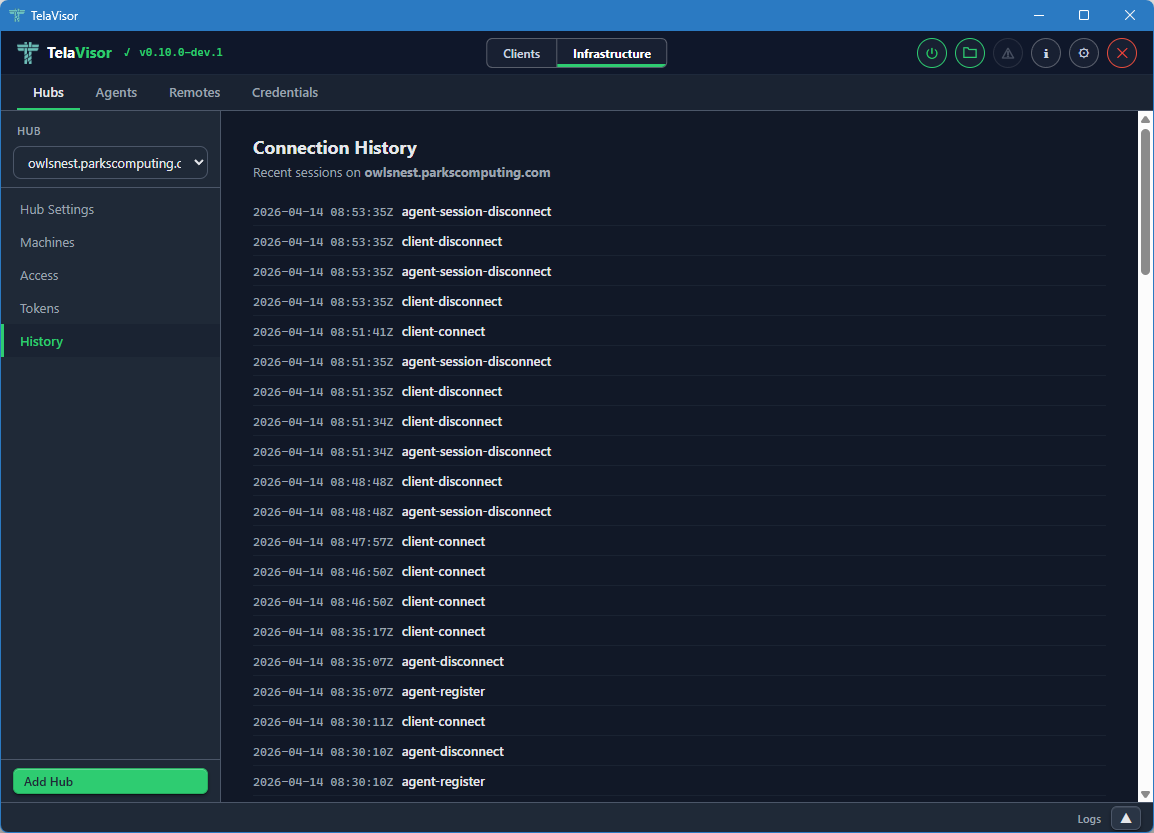

Recent Activity

The Recent Activity section shows the last 200 connection events: sessions opened, sessions closed, and agent registrations. Each entry shows the timestamp, event type, machine name, and client address.

Pairing (admin only)

Owners and administrators see a Pairing section that other users do not. It generates one-time pairing codes, so you do not need to run tela admin pair-code on the command line.

Fields:

| Field | Options | Description |
|---|---|---|
| Type | Connect, Register | Connect codes are for users; register codes are for new agents |
| Expiration | 10 minutes, 1 hour, 24 hours, 7 days | How long the code remains valid |
| Machine scope | Machine ID or * | Which machine(s) the code grants access to |

After clicking Generate Code, the console displays the short code and the redemption command to give to the recipient. The code is single-use and cannot be regenerated.

Download

When a stable or beta release has been published to the GitHub Release channel, the Download section appears with direct links to the tela client binary for each supported platform and architecture.

CLI Quick Reference

A brief reminder of the most common tela commands, for operators sharing hub access with users who are not yet familiar with the client.

Authentication

The hub injects a viewer token into the console page at load time. This token has the viewer role and allows read-only access to the Machines and Recent Activity data without any login step.

The Pairing section appears only when the browser presents a token with owner or admin role. You can authenticate at a higher level by appending ?token=<admin-token> to the console URL.
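If you build that URL in a script, escape the token as a query parameter instead of concatenating strings. Otherwise, characters such as + or & in the token change the meaning of the query. The following is a minimal Go sketch. The port 8443 and the token value are hypothetical placeholders.

```go
package main

import (
	"fmt"
	"net/url"
)

// consoleURL appends ?token=<token> to the console address, escaping
// characters such as '+' or '&' that would otherwise alter the query.
func consoleURL(base, token string) (string, error) {
	u, err := url.Parse(base)
	if err != nil {
		return "", err
	}
	q := u.Query()
	q.Set("token", token)
	u.RawQuery = q.Encode()
	return u.String(), nil
}

func main() {
	s, err := consoleURL("https://hub.example.com:8443/", "abc+def")
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(s) // https://hub.example.com:8443/?token=abc%2Bdef
}
```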

Theme

The console supports light, dark, and system-preference themes. The toggle is in the top navigation bar. The preference is stored in browser local storage.

When to use the console vs. the CLI

The console is convenient for checking machine status at a glance and for generating pairing codes without terminal access. For anything beyond those two tasks -- managing tokens, changing permissions, viewing agent configuration, or triggering updates -- use tela admin from a terminal.

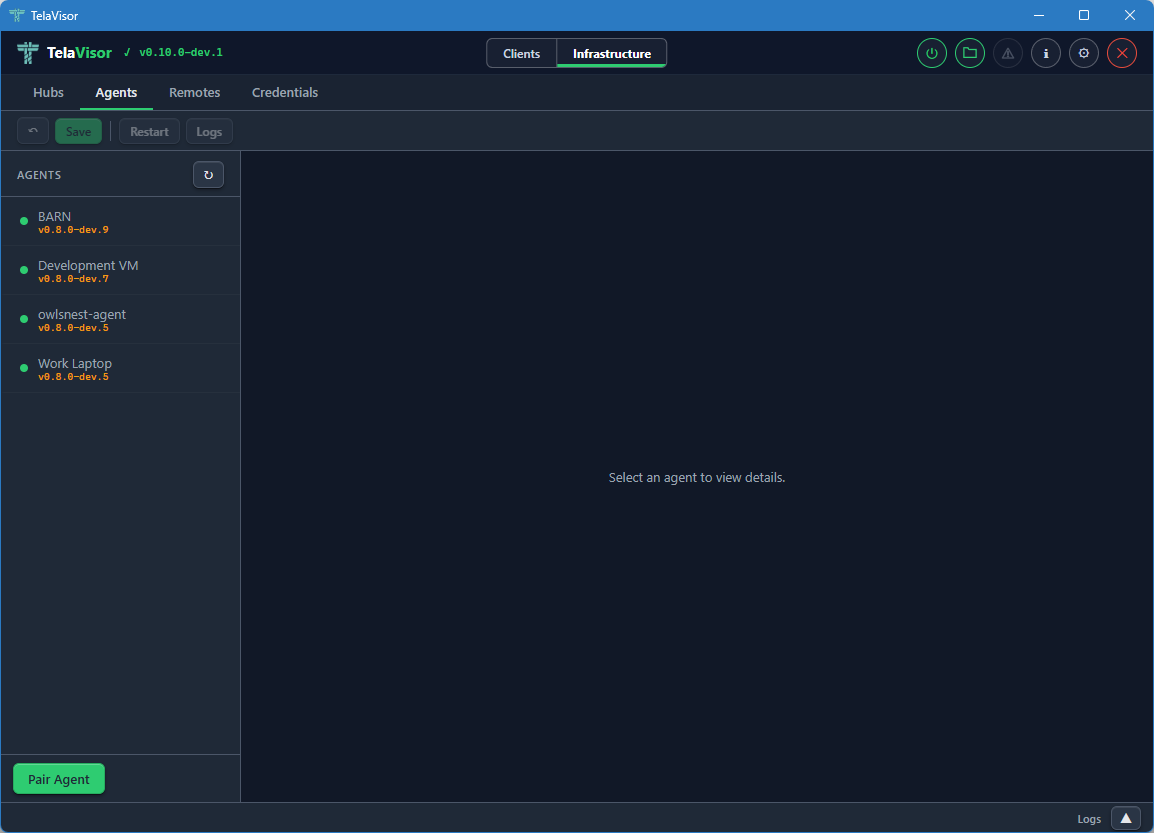

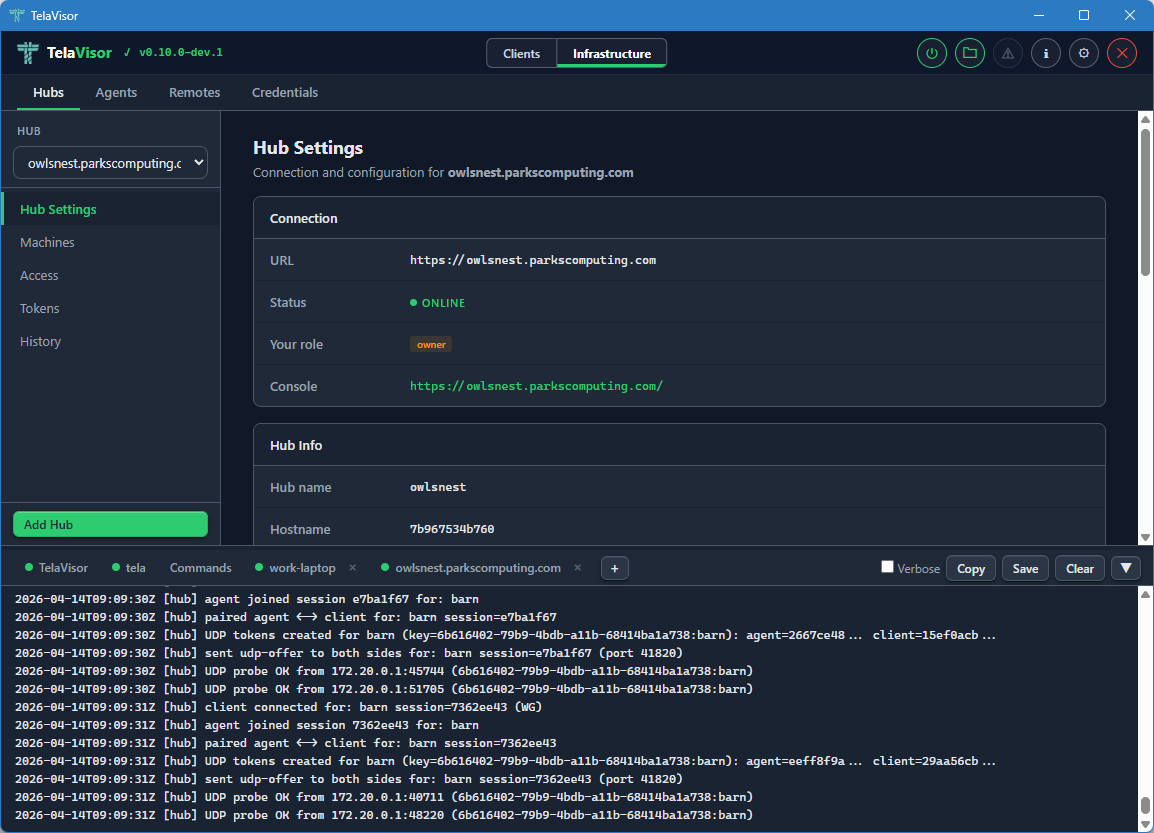

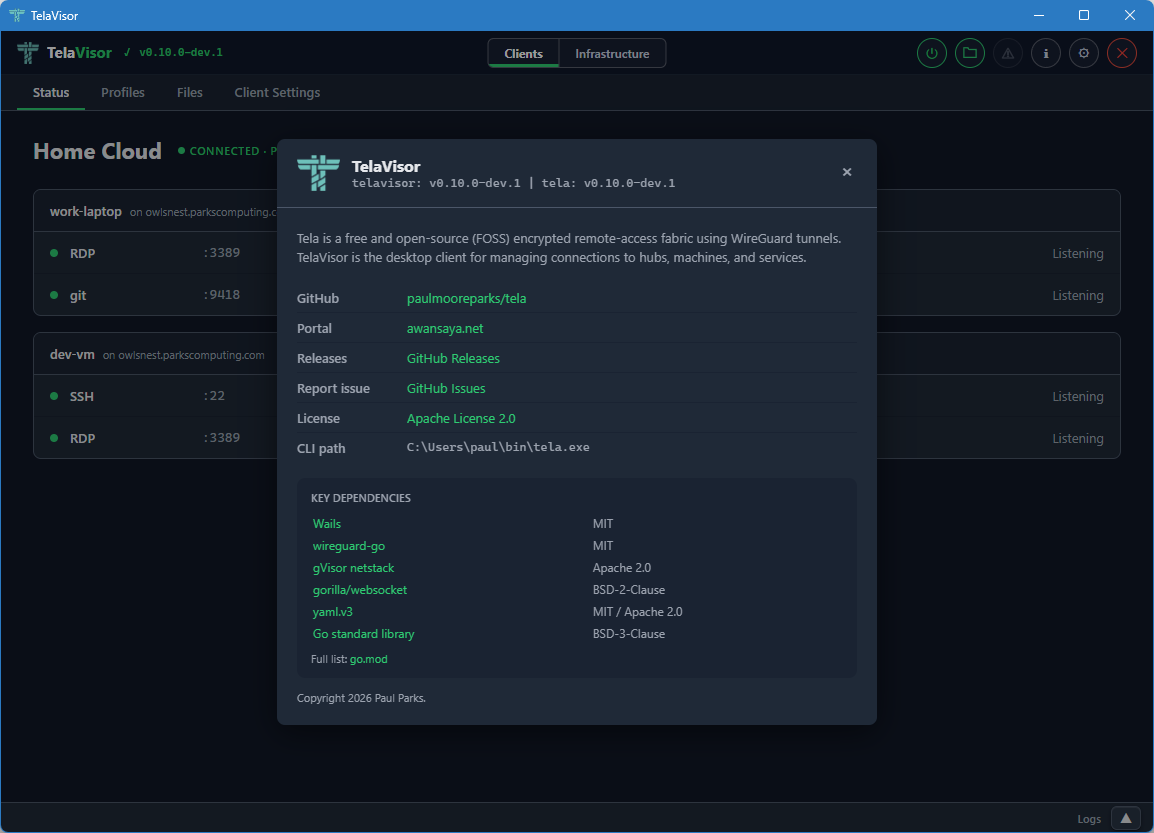

TelaVisor

TelaVisor is the desktop graphical interface for Tela. It wraps the tela command-line tool in a window with menus, dialogs, panels, and a file browser, so you can manage connections, hubs, agents, profiles, files, and credentials without ever opening a terminal. It runs on Windows, Linux, and macOS.

What TelaVisor is, and what it is not

TelaVisor manages the full life cycle of connecting to remote services through Tela hubs:

- Storing hub credentials. Add hubs by Uniform Resource Locator (URL) and token, or use a one-time pairing code. Credentials are stored in the same credential store that tela login uses, so the desktop client and the command line share the same set of authenticated hubs.

- Selecting services. Browse machines registered on each hub, see which are online, and check the services you want to connect to.

- Connecting with one click. TelaVisor saves your selections as a connection profile, launches tela connect -profile, and monitors the process.

- Monitoring tunnel status. The Status view shows each selected service with its remote port, local address, and current state. Status updates arrive in real time over tela's WebSocket control application programming interface (API).

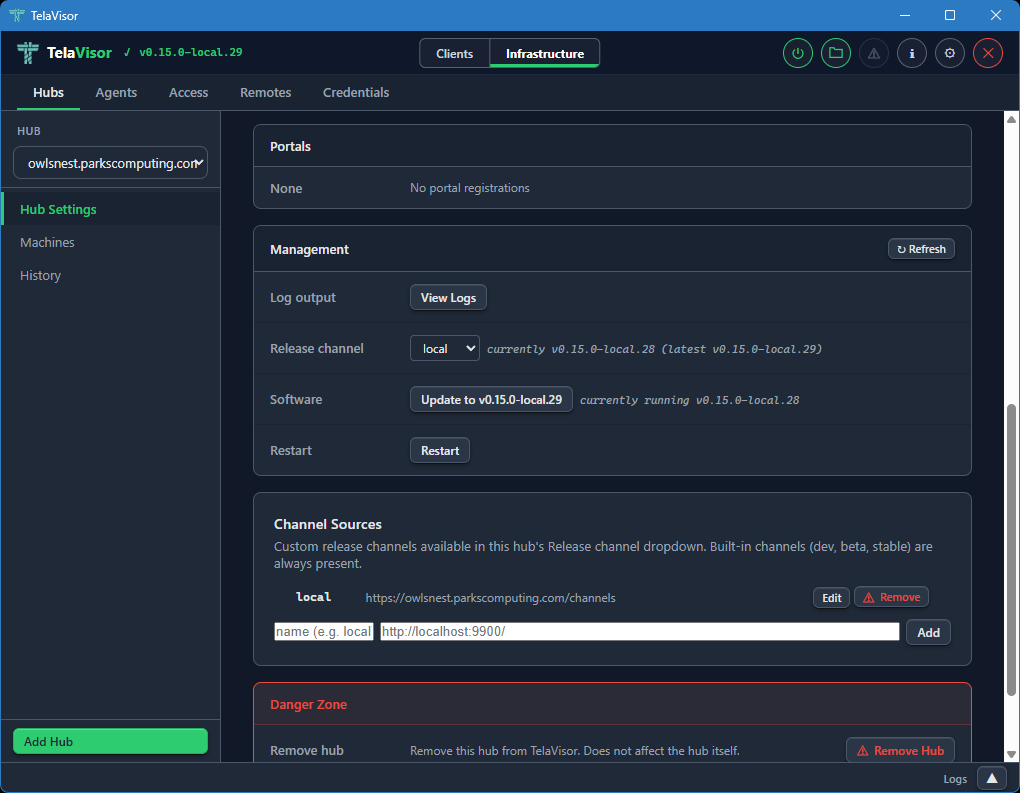

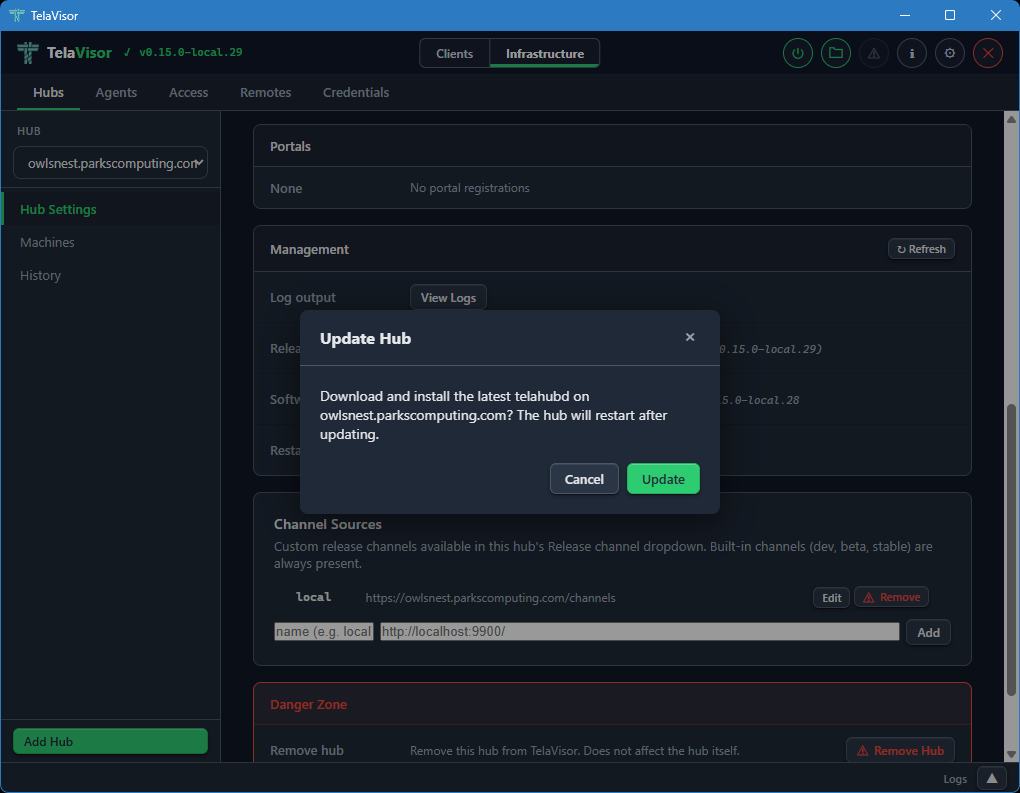

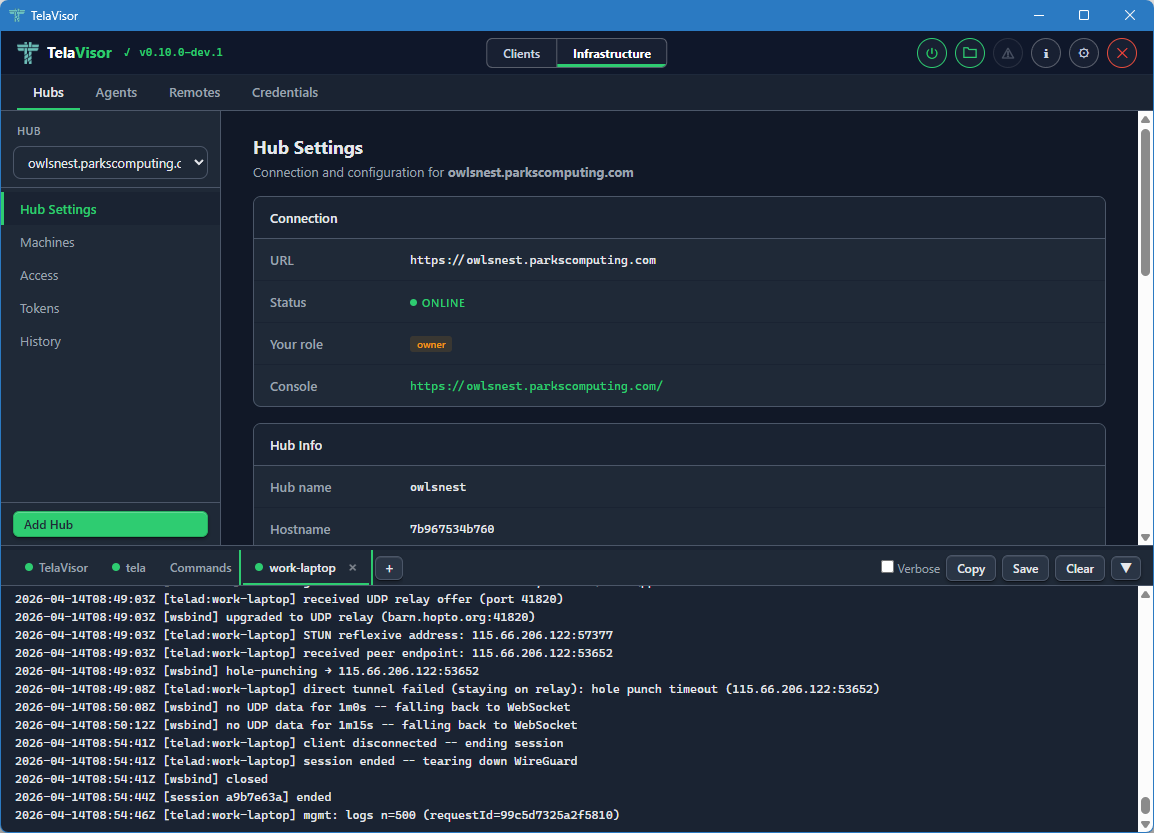

- Managing hubs. View hub settings, manage tokens, configure per-machine access, view connection history, generate pairing codes, view remote logs, and update or restart hub binaries from Infrastructure mode.

- Managing agents. View agent details, services, file share configuration, push configuration changes through the hub-mediated management protocol, view remote logs, and update or restart agent binaries from the Agents tab.

- Managing multiple profiles. Create, rename, delete, import, and export profiles. Each profile is a standalone YAML file compatible with tela connect -profile.

- Browsing remote files. The built-in file browser provides Explorer-style access to file shares on connected machines through the encrypted tunnel.

TelaVisor does not implement tunneling itself. The tela command-line process builds the encrypted WireGuard tunnel. TelaVisor is a control surface around that process: it writes profile files, launches the binary, talks to its local control API, and renders state. The How TelaVisor works with tela section at the end of this chapter explains the architecture.
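The launch step can be sketched with Go's os/exec package. Only the tela connect -profile command line comes from this chapter. The function names, the profile path, and the use of cmd.Wait to detect exit are illustrative assumptions, not TelaVisor's source. The sketch also leaves out the connection to tela's local control API.

```go
package main

import (
	"fmt"
	"os/exec"
	"path/filepath"
)

// connectCommand builds "tela connect -profile <file>".
func connectCommand(telaPath, profilePath string) *exec.Cmd {
	return exec.Command(telaPath, "connect", "-profile", profilePath)
}

// runAndWatch starts the process and reports its exit on a channel,
// so a supervising UI can notice when the tunnel process goes away.
func runAndWatch(cmd *exec.Cmd) (<-chan error, error) {
	if err := cmd.Start(); err != nil {
		return nil, err
	}
	done := make(chan error, 1)
	go func() { done <- cmd.Wait() }()
	return done, nil
}

func main() {
	profile := filepath.Join("profiles", "home.yaml") // hypothetical location
	cmd := connectCommand("tela", profile)
	fmt.Println(cmd.Args)
	done, err := runAndWatch(cmd)
	if err != nil {
		fmt.Println("could not start tela:", err) // for example, tela is not on PATH
		return
	}
	fmt.Println("tela exited:", <-done)
}
```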

TelaVisor is also the reference implementation of the Tela Design Language (TDL), the visual language shared across all Tela products. The top bar, mode toggle, tab bar, toolbar separators, icon buttons, modals, and color system you see in TelaVisor are the canonical examples of TDL.

Installing and launching

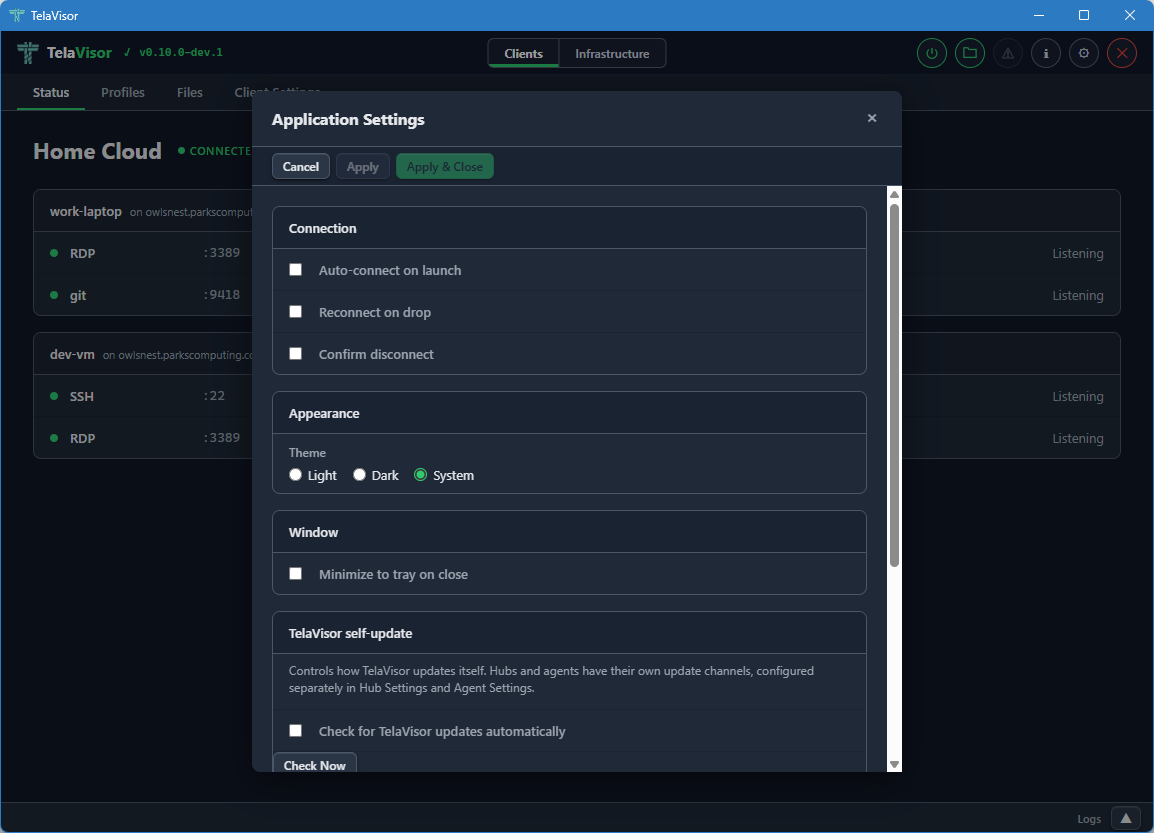

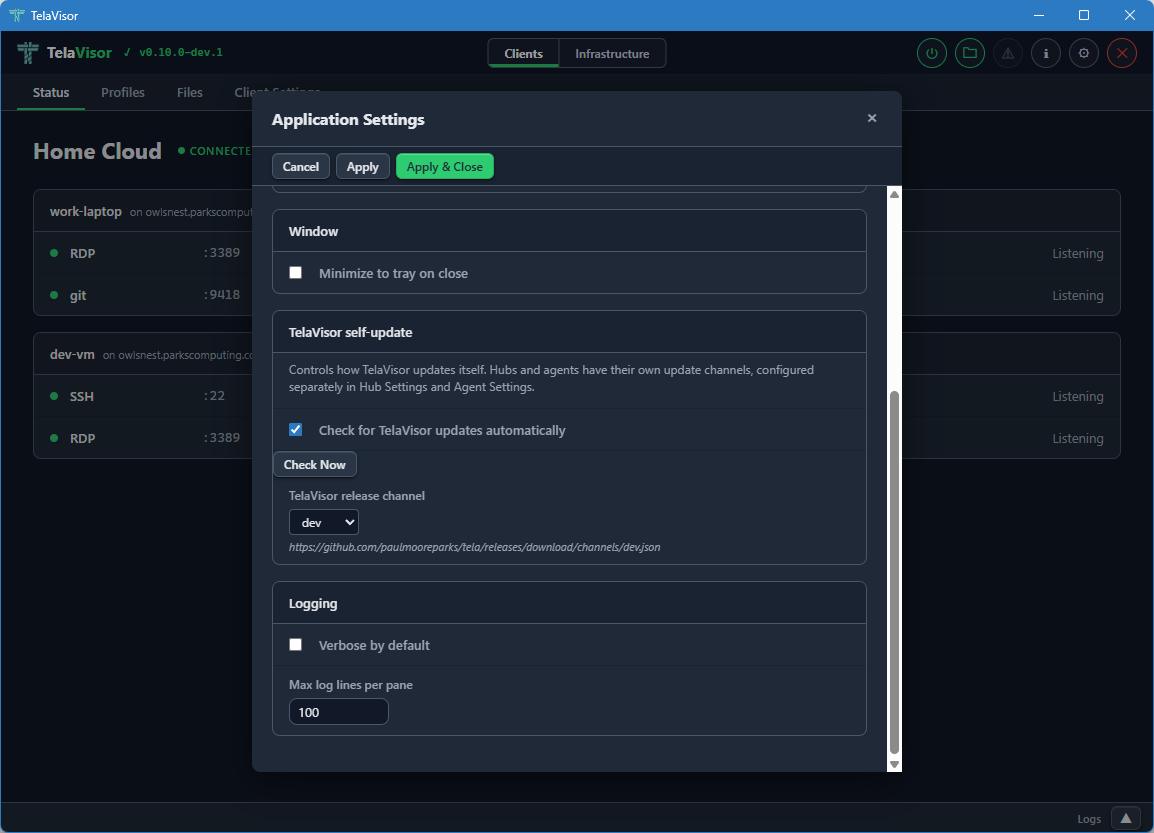

TelaVisor ships as a single-file native application for each supported platform. Download the appropriate build from your configured release channel and run it. There is no installer to navigate, no kernel driver to sign, no service to register unless you choose to install one. The application starts with a default profile pre-populated and the Status tab visible.

On first launch, TelaVisor's title bar shows the application name and version, the mode toggle in the center, and several icon buttons on the right side: a power button (the connection toggle), a file manager shortcut, an information button, an update warning indicator (only when an update is available), a settings gear, and a quit button. The window is resizable. Window position and size are saved on close and restored on the next launch.

The application supports light and dark themes. The default is the system preference, which you can override in Application Settings.

The two-mode layout

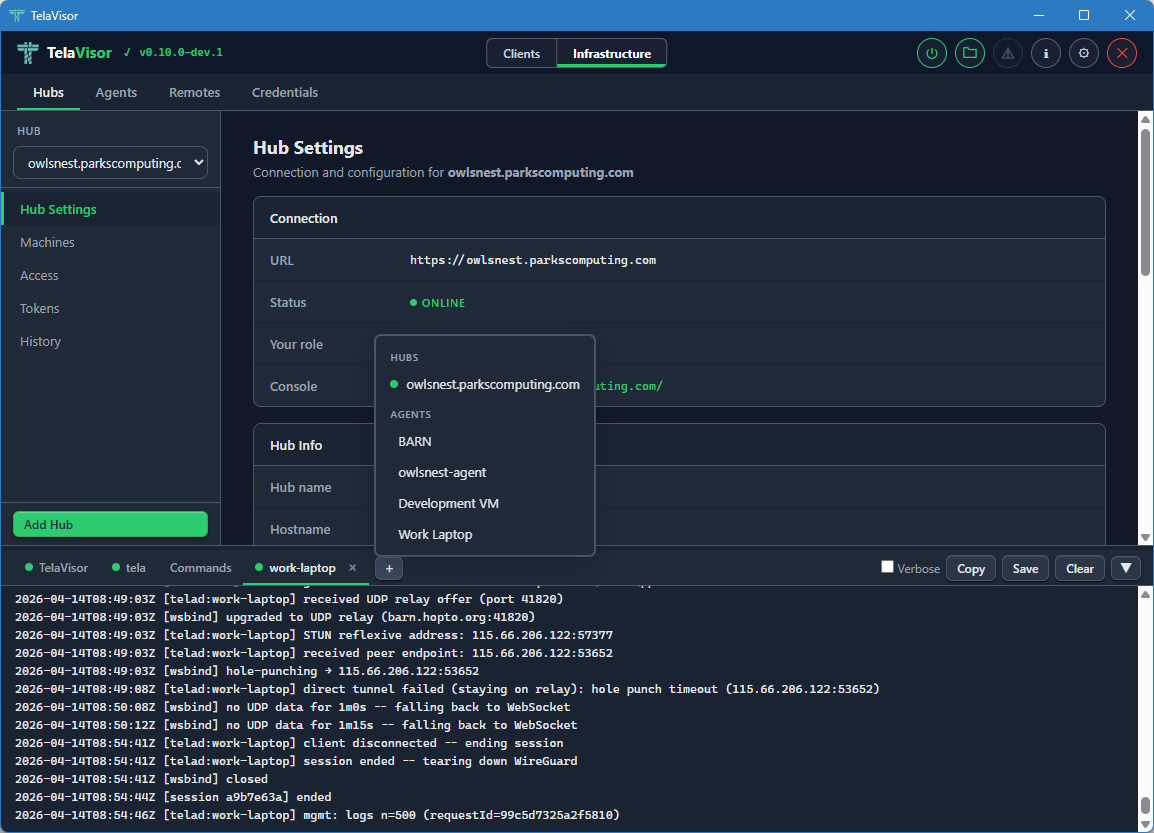

TelaVisor uses a two-mode layout. The mode toggle in the center of the title bar switches between Clients mode and Infrastructure mode. Each mode has its own tab bar and its own set of features.

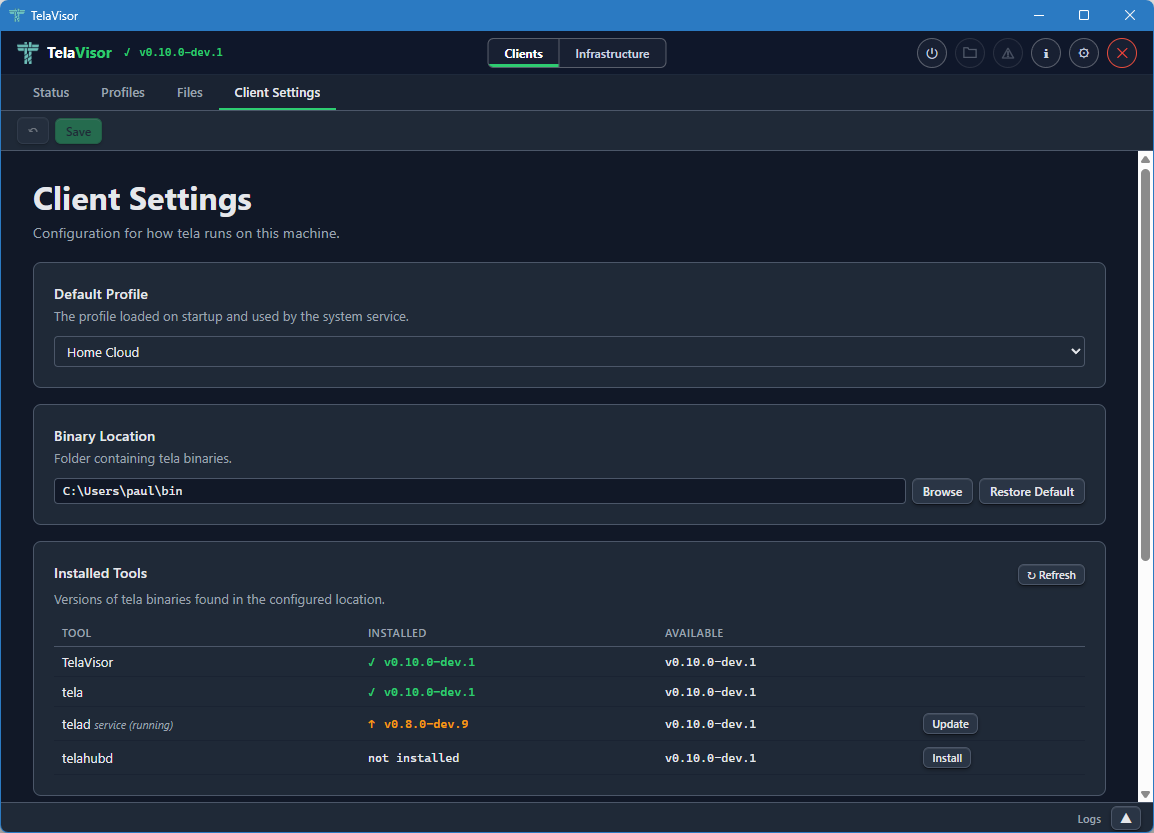

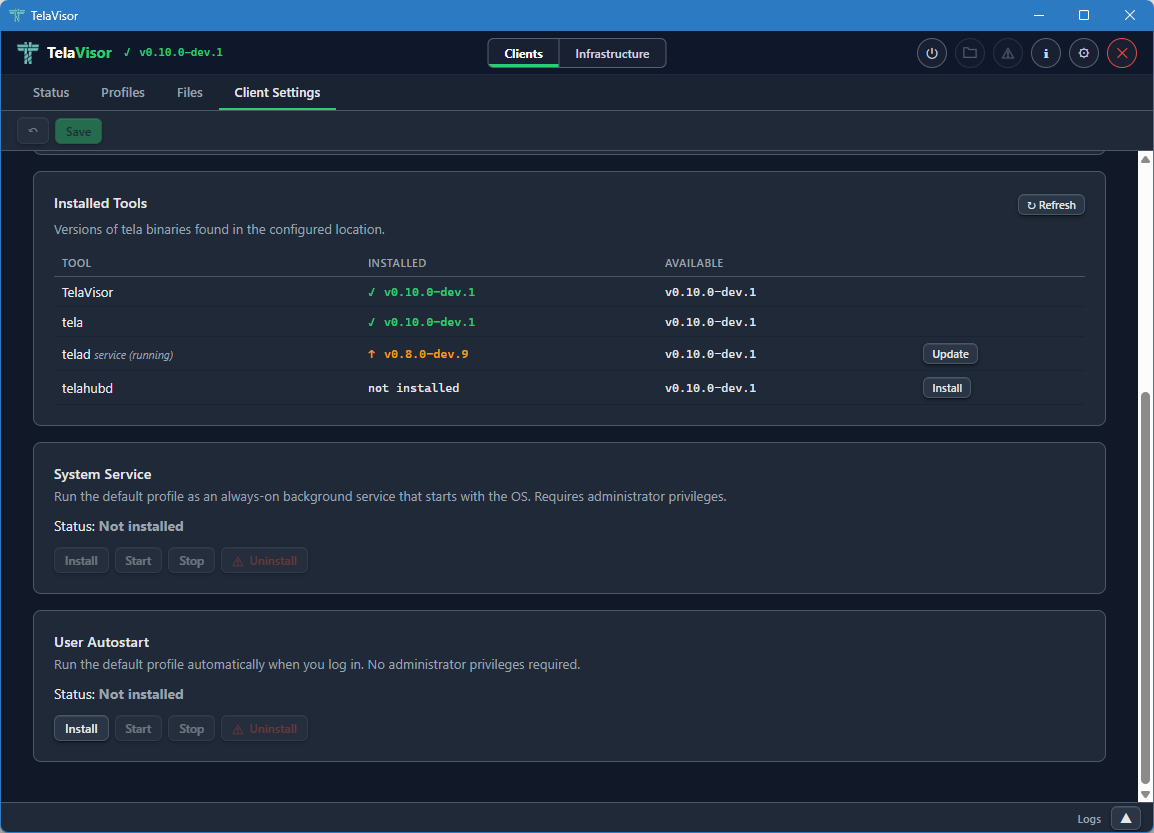

- Clients mode is for connecting to remote services. Its tabs are Status, Profiles, Files, and Client Settings. Read this mode as everything a user does to use a tunnel.

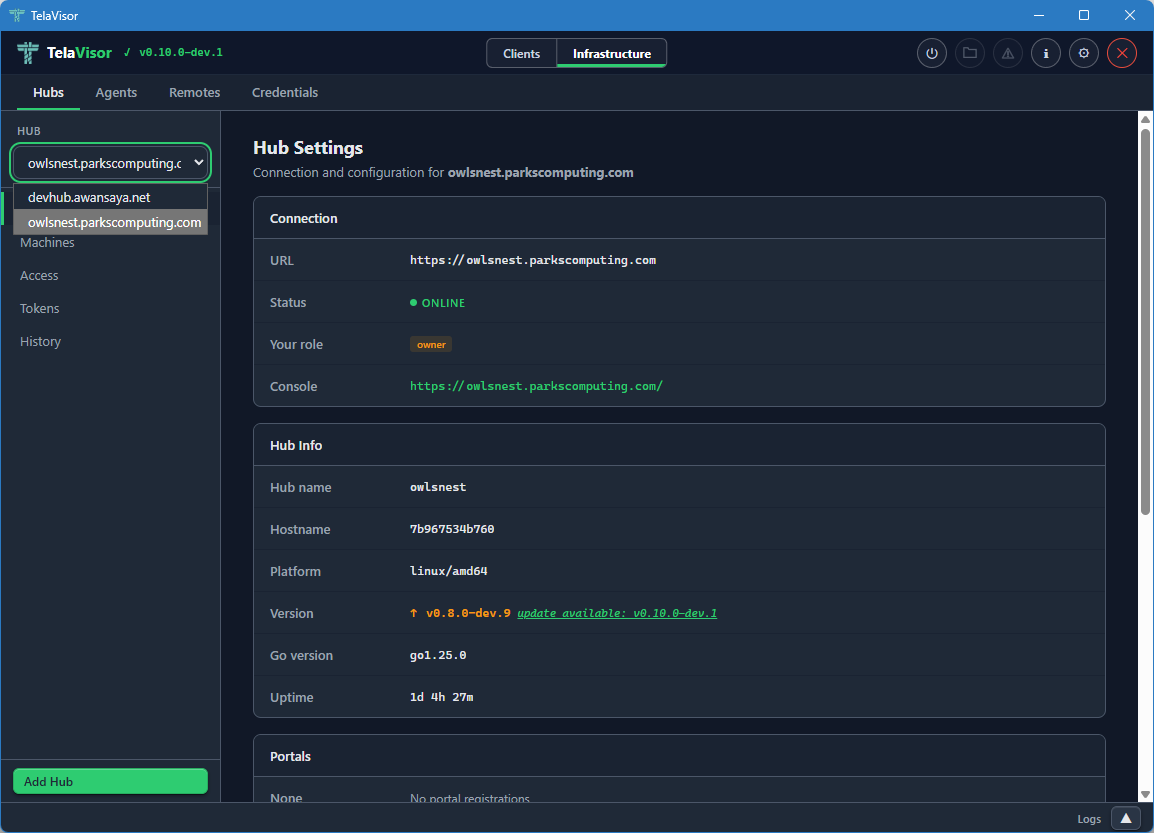

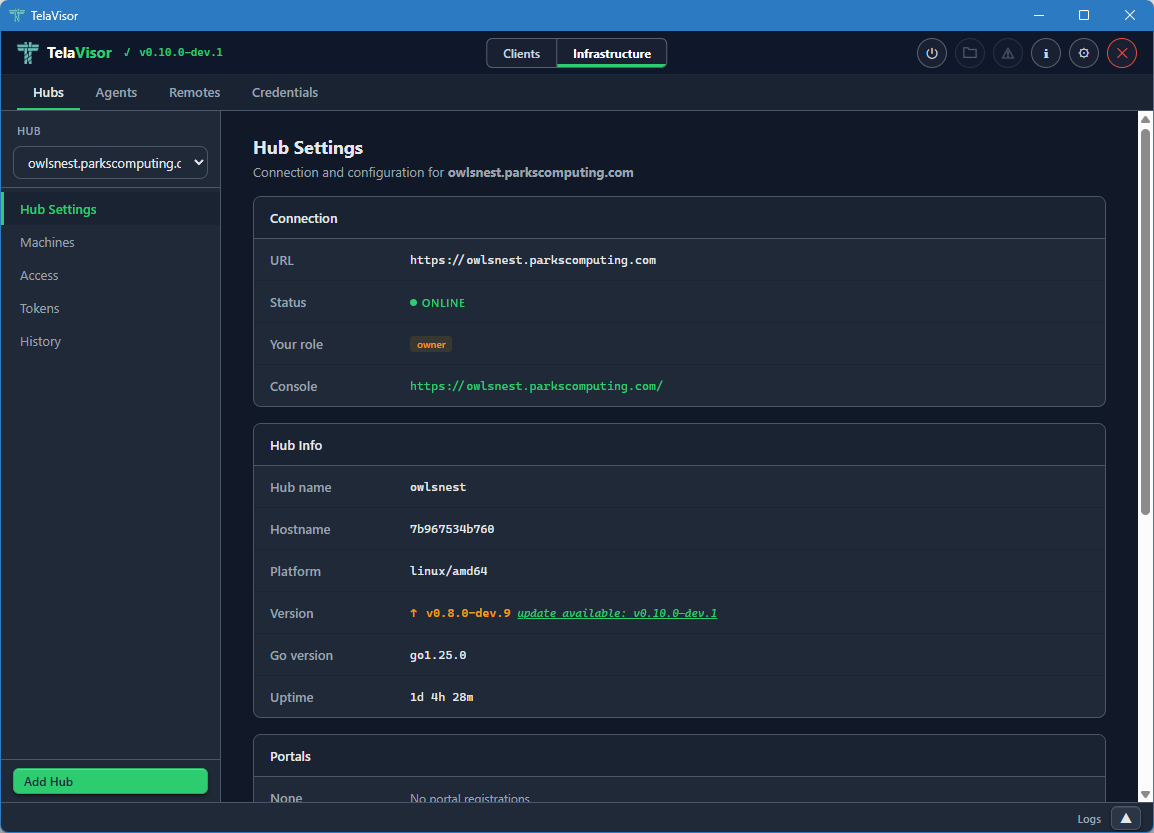

- Infrastructure mode is for administering the system that the tunnels run on. Its tabs are Hubs, Agents, Remotes, and Credentials. Read this mode as everything an operator does to keep tunnels working.

A persistent log panel sits at the bottom of the window across both modes. You can drag its top edge to resize it, or click the chevron to collapse it to a slim status bar. The Log panel section covers it in detail.

The two modes have different audiences but the same window. A user who only ever needs to make connections can stay in Clients mode and never visit Infrastructure mode. An operator who runs hubs and agents on behalf of others spends most of their time in Infrastructure mode. A power user moves between both freely.

Clients mode

Status

The Status tab is the page TelaVisor opens to. It answers the question "Am I connected, and to what?"

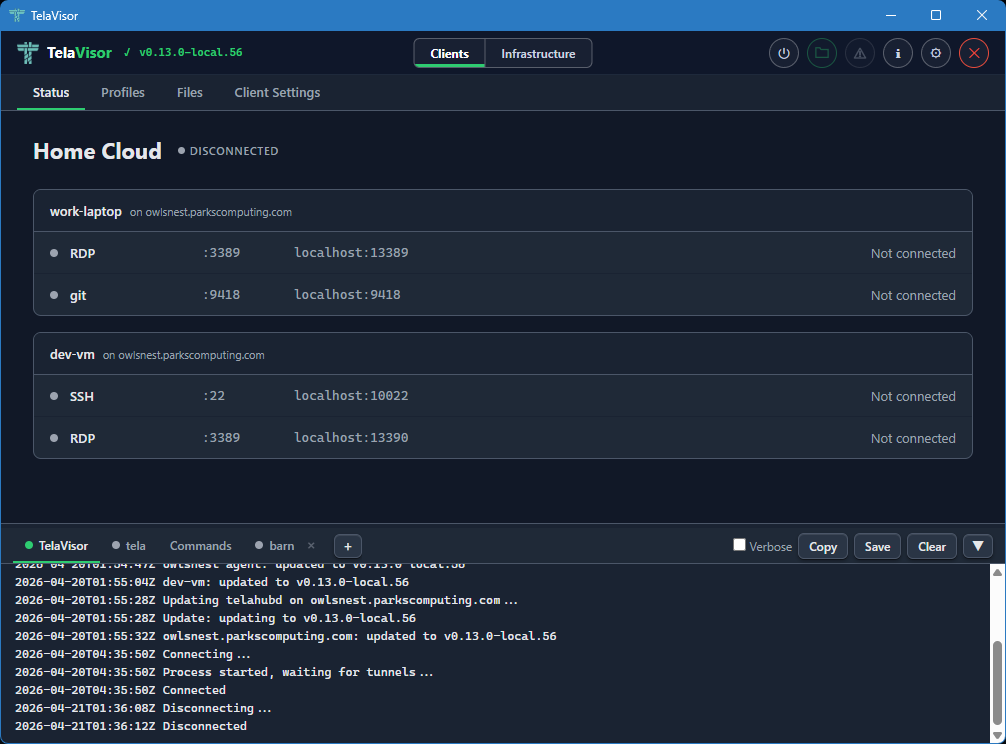

When TelaVisor is not connected, the Status page shows the active profile name, a "Disconnected" badge, and a list of services that the profile is configured to expose. Each service line shows a grey indicator dot, the service name, the remote port on the target machine, the local address that tela would bind to, and a status reading "Not connected."

The power button in the title bar is grey when disconnected. Clicking it starts the connection. The button turns amber and pulses while the tunnel is being established, then turns solid green when the tunnel is up.

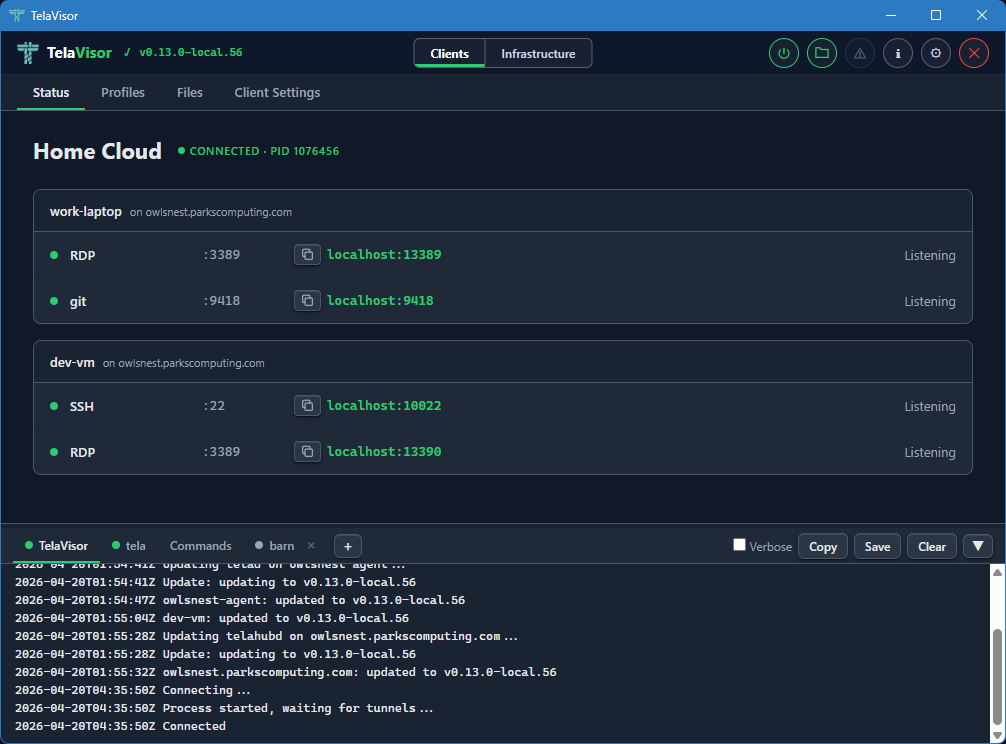

When the tunnel is up, the Status page changes shape. The "Disconnected" badge becomes a green "Connected" badge with the process identifier (PID) of the tela child process in parentheses, the power button turns green, and each service line updates to show its current state. A service that is bound and waiting for traffic reads "Listening." A service with an active session reads "Active" with the number of current connections. A service that failed to bind reads the bind error in red.

Each service indicator dot is grey when disconnected and green when listening or active. Transitions between Listening and Active happen in real time as you start and stop sessions against the local addresses from outside TelaVisor. For example, run ssh user@localhost -p PORT (using the port shown in the Status tab) against a Listening SSH service. The dot stays green, the status changes to "Active" with a connection count of one for the duration of the session, and it returns to "Listening" when the session ends.

The status updates arrive over a local WebSocket that the tela process opens for TelaVisor to subscribe to. There is no polling. The values you see on the Status page are pushed by tela the moment they change in the tunnel.
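With push delivery, the subscriber simply waits and the publisher sends each change once, at the moment it happens. This hypothetical Go sketch shows that fan-out pattern, with a buffered channel standing in for each WebSocket connection. It does not reproduce tela's control API or message format.

```go
package main

import (
	"fmt"
	"sync"
)

type update struct {
	Service string
	State   string
}

// broadcaster fans each update out to every subscriber as it happens.
type broadcaster struct {
	mu   sync.Mutex
	subs []chan update
}

func (b *broadcaster) Subscribe() <-chan update {
	ch := make(chan update, 16)
	b.mu.Lock()
	b.subs = append(b.subs, ch)
	b.mu.Unlock()
	return ch
}

// Publish blocks if a subscriber's buffer is full; a real server would
// drop or disconnect slow clients instead.
func (b *broadcaster) Publish(u update) {
	b.mu.Lock()
	defer b.mu.Unlock()
	for _, ch := range b.subs {
		ch <- u
	}
}

// Close ends every subscription.
func (b *broadcaster) Close() {
	b.mu.Lock()
	defer b.mu.Unlock()
	for _, ch := range b.subs {
		close(ch)
	}
	b.subs = nil
}

func main() {
	var b broadcaster
	ui := b.Subscribe()
	b.Publish(update{Service: "SSH", State: "Listening"})
	b.Publish(update{Service: "SSH", State: "Active (1)"})
	b.Close()
	for u := range ui {
		fmt.Println(u.Service, u.State)
	}
}
```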

To disconnect, click the power button in the title bar again, or quit TelaVisor. If you have Confirm disconnect enabled in Application Settings, TelaVisor asks for confirmation before tearing the tunnel down.
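The power button behaves as a three-state machine: grey when disconnected, amber while connecting, and green when connected, with an optional confirmation before disconnecting. This Go sketch is hypothetical. The chapter does not say what a click does while the tunnel is still being established, so the sketch assumes such clicks are ignored.

```go
package main

import "fmt"

type connState int

const (
	disconnected connState = iota
	connecting
	connected
)

// buttonColor follows the title-bar power button described in this chapter.
func (s connState) buttonColor() string {
	switch s {
	case connecting:
		return "amber (pulsing)"
	case connected:
		return "green"
	default:
		return "grey"
	}
}

// click handles a power-button press. confirm is called only when the
// Confirm disconnect setting is on. ASSUMPTION: clicks while connecting
// are ignored.
func click(s connState, confirmSetting bool, confirm func() bool) connState {
	switch s {
	case disconnected:
		return connecting
	case connected:
		if confirmSetting && !confirm() {
			return connected
		}
		return disconnected
	default:
		return s
	}
}

// tunnelUp is applied when tela reports that the tunnel is established.
func tunnelUp(s connState) connState {
	if s == connecting {
		return connected
	}
	return s
}

func main() {
	s := disconnected
	fmt.Println(s.buttonColor()) // grey
	s = click(s, false, nil)
	fmt.Println(s.buttonColor()) // amber (pulsing)
	s = tunnelUp(s)
	fmt.Println(s.buttonColor()) // green
	s = click(s, true, func() bool { return true }) // user confirms
	fmt.Println(s.buttonColor())                    // grey
}
```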

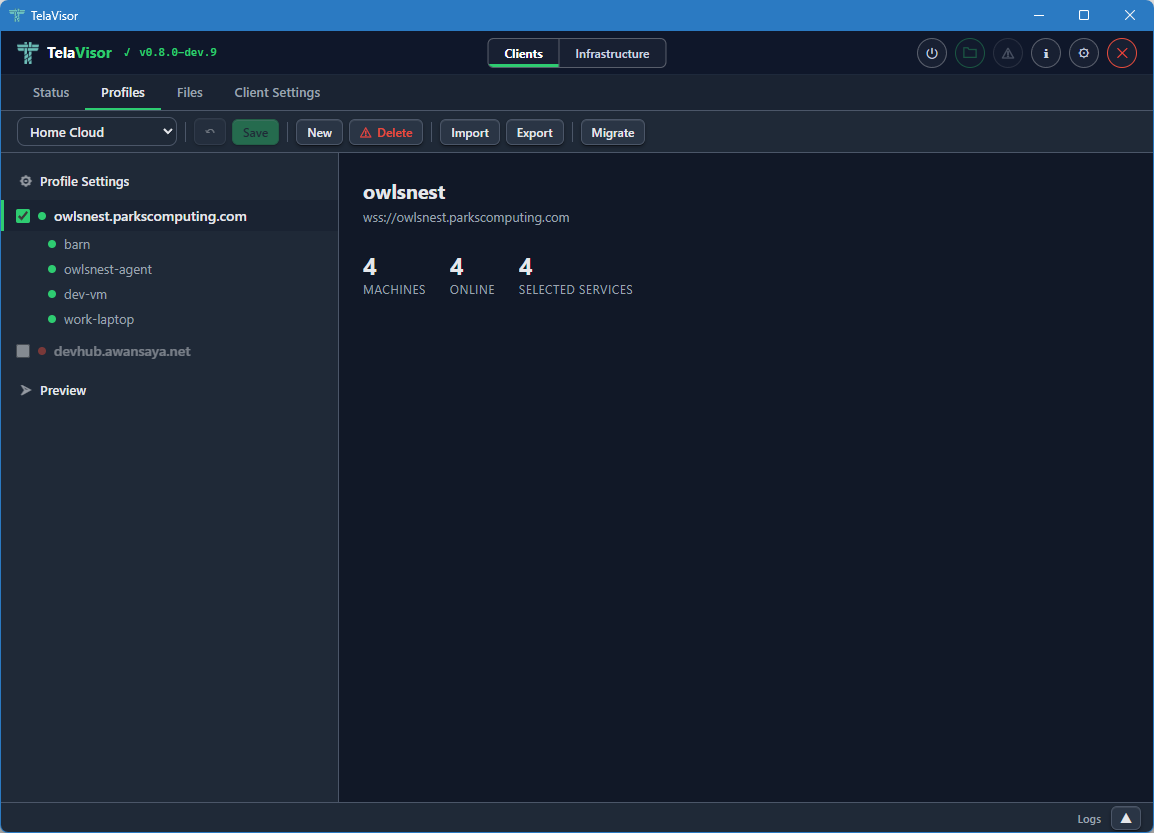

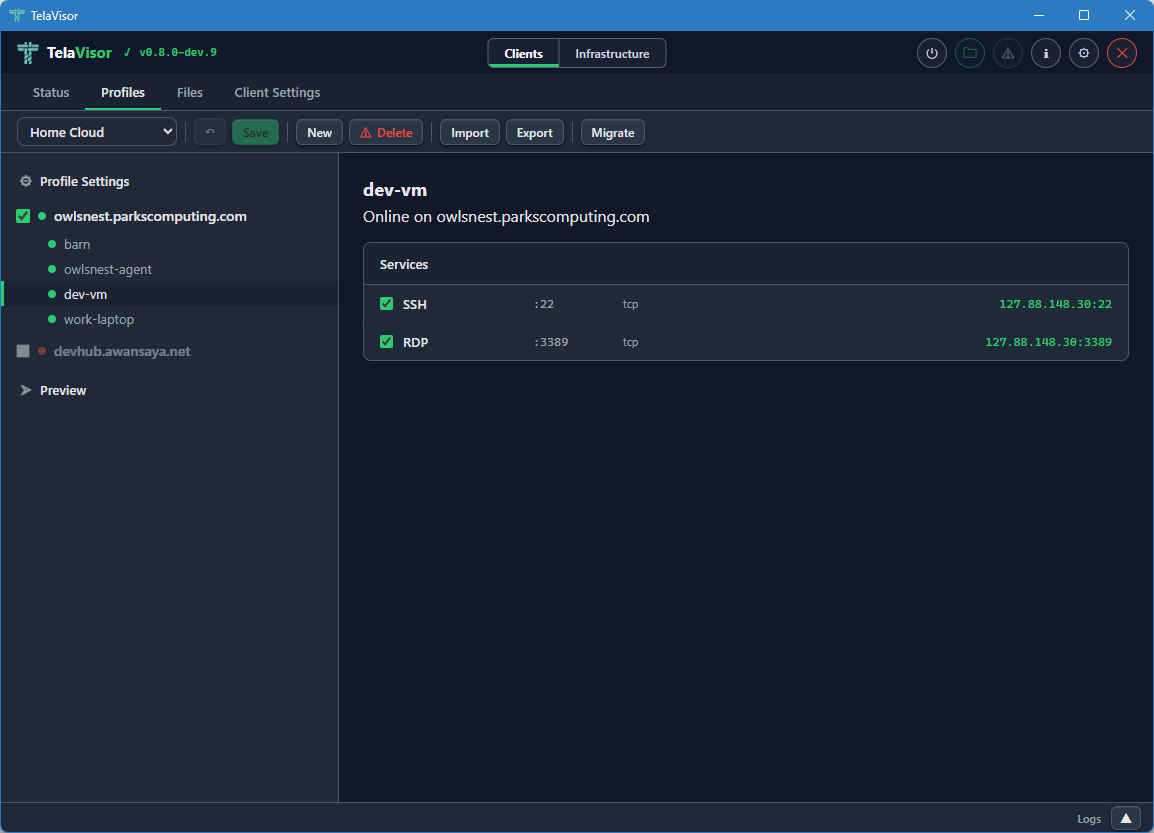

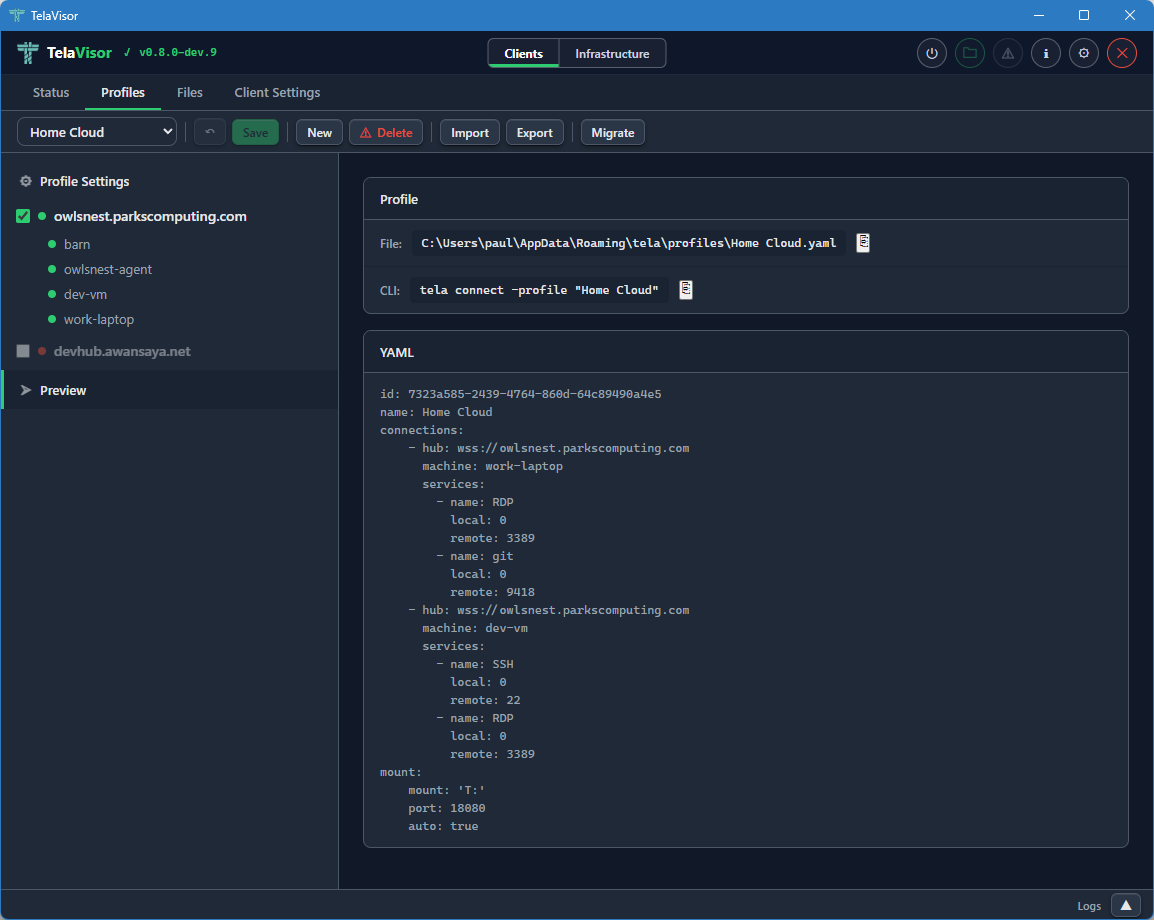

Profiles

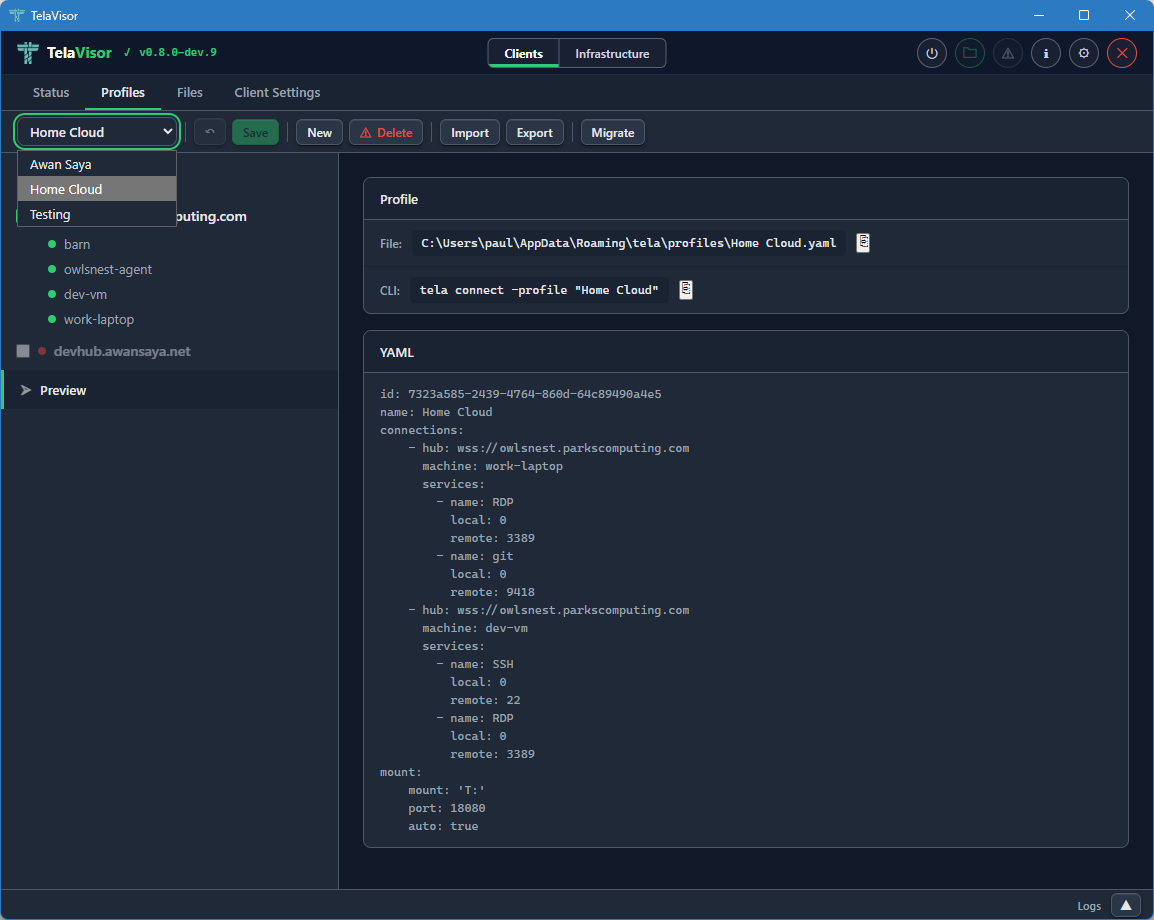

The Profiles tab is where you build connection profiles. A connection profile is a YAML file that names one or more hubs, the machines on those hubs you want to reach, and the services on those machines you want to expose locally. The same YAML file format is consumed by tela connect -profile from the command line; the desktop application and the command line use profiles interchangeably.

The Profiles tab has a toolbar across the top with the controls for managing the profile collection. From left to right, the toolbar contains:

- Profile dropdown. Selects the active profile. Clicking the dropdown opens a list of every profile in your profile directory. Selecting one loads it into the editor below and makes it the active profile for the Status, Files, and Client Settings tabs as well.

- Undo. Reverts unsaved changes to the most recently saved state of the profile.

- Save. Writes the current selections to the profile YAML file. The button is enabled only when there are unsaved changes.

- New. Creates a new empty profile. Prompts for the profile name and creates an empty YAML file in the profile directory.

- Delete. Deletes the active profile, with confirmation.

- Import. Imports a profile YAML file from a path on disk. Useful for receiving a profile from another machine or another user.

- Export. Saves the active profile to a chosen path on disk. Useful for sharing a profile or backing it up.
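Undo and Save both depend on comparing the edited selections against the last saved state. This minimal Go sketch shows that kind of dirty tracking. The profileEditor type and its "hub/machine/service" keys are hypothetical stand-ins for TelaVisor's internals. The sketch compares the current selections with the saved ones instead of setting a "changed" flag, so checking and then unchecking the same box leaves the profile clean.

```go
package main

import "fmt"

// profileEditor tracks the last-saved and in-progress selections.
type profileEditor struct {
	saved   map[string]bool
	current map[string]bool
}

func copySet(m map[string]bool) map[string]bool {
	out := make(map[string]bool, len(m))
	for k, v := range m {
		out[k] = v
	}
	return out
}

// sameSet compares two sets whose stored values are always true.
func sameSet(a, b map[string]bool) bool {
	if len(a) != len(b) {
		return false
	}
	for k := range a {
		if !b[k] {
			return false
		}
	}
	return true
}

func newProfileEditor(saved map[string]bool) *profileEditor {
	return &profileEditor{saved: copySet(saved), current: copySet(saved)}
}

// Toggle checks or unchecks one service.
func (e *profileEditor) Toggle(key string) {
	if e.current[key] {
		delete(e.current, key)
	} else {
		e.current[key] = true
	}
}

// Dirty reports unsaved changes; Save is enabled only when this is true.
func (e *profileEditor) Dirty() bool { return !sameSet(e.saved, e.current) }

// Undo reverts to the most recently saved state.
func (e *profileEditor) Undo() { e.current = copySet(e.saved) }

// Save records the current state as saved. Writing the YAML file is omitted.
func (e *profileEditor) Save() { e.saved = copySet(e.current) }

func main() {
	e := newProfileEditor(nil)
	e.Toggle("home-hub/nas/SSH")
	fmt.Println(e.Dirty()) // true
	e.Undo()
	fmt.Println(e.Dirty()) // false
}
```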

Below the toolbar, the page is split into a left sidebar and a right panel. The left sidebar lists three things: a Profile Settings entry, the hubs you have credentials for, and a Preview entry. Each hub has a checkbox that toggles whether the hub is included in the profile. Hubs that are checked expand to show the machines registered with them. Each machine has a coloured dot indicating its current online state.

The right panel changes based on what you have selected in the sidebar.

Profile Settings

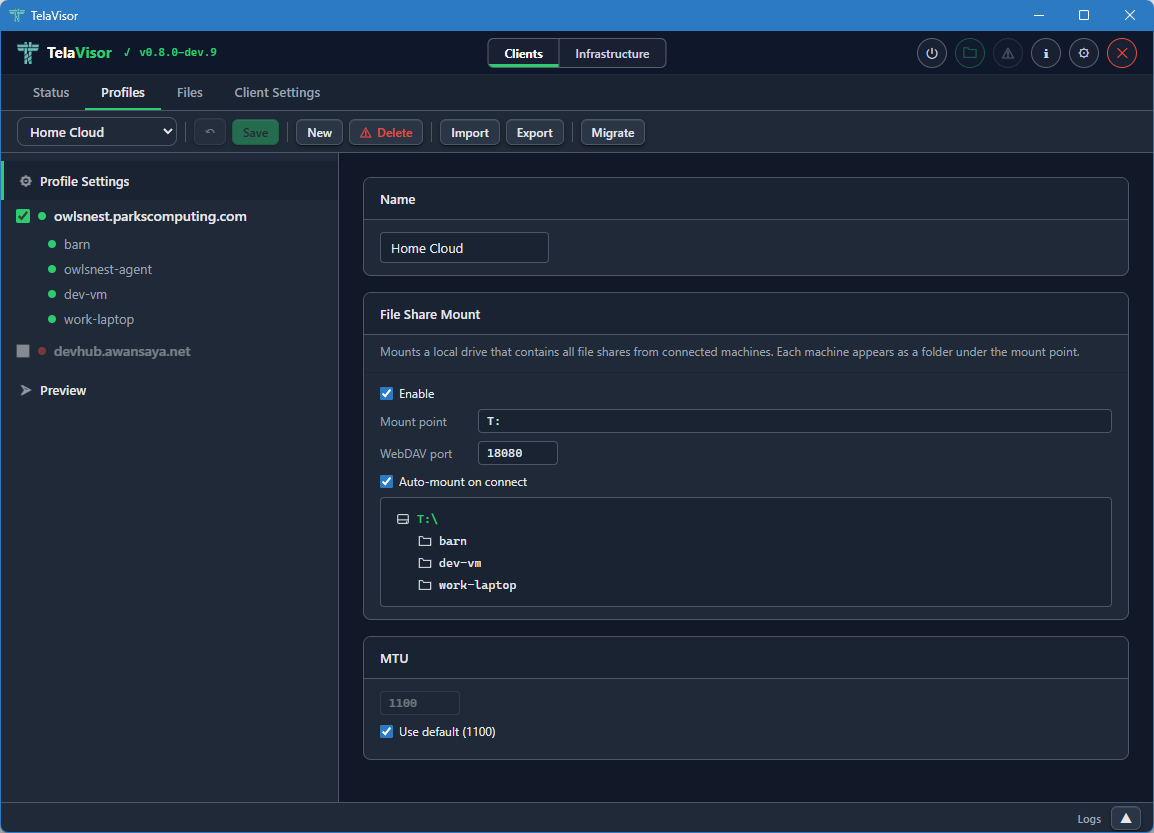

Selecting the Profile Settings row in the sidebar shows the profile-level configuration. This is the configuration that applies to the profile as a whole, not to any one machine.

The Profile Settings panel contains:

- Name. The display name of the profile. The name is the file name of the YAML file (minus the extension) and is what appears in the profile dropdown.

- File Share Mount. The Web Distributed Authoring and Versioning (WebDAV) mount configuration. An Enable checkbox turns the mount on or off. The Mount point field sets the local path or drive letter to mount onto. The Port field sets the local TCP port the WebDAV server listens on. The Auto-mount on connect checkbox mounts the share automatically when the profile connects. Below these controls, a live preview lists every machine in the profile that has file